Action Theory: when doing comes before knowing

In our idealised world, sufficient data is gathered before decisions are made. In reality, binding outcomes must be generated under uncertainty. This gap gives rise to a new discipline.

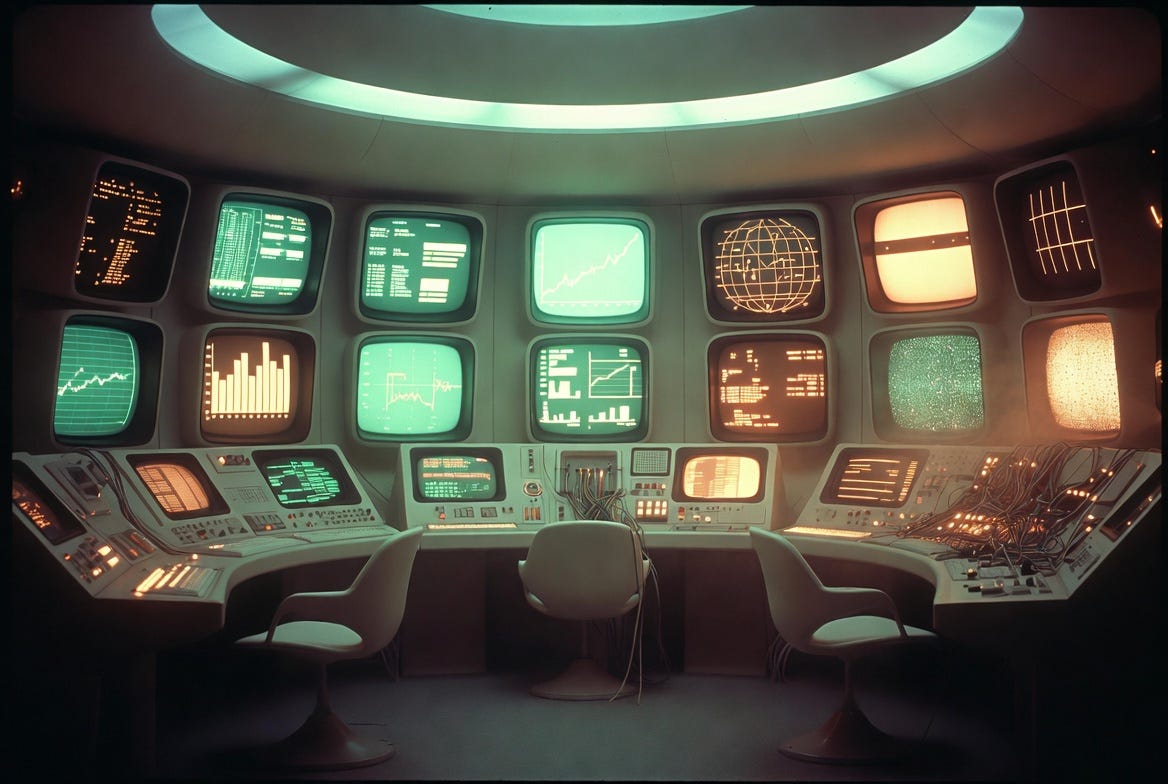

Picture, if you will, an operations room in a government facility. The furniture would not look out of place in 2001: A Space Odyssey. The carpet is that unmistakable 1970s green. In the background, telex machines and mainframe computers whir away. Bulbous, glowing screens display charts of economic activity.

This actually happened. Project Cybersyn was an effort by Salvador Allende's left-wing government in Chile, in the early 1970s, to model the economy centrally, using live feeds from industrial production. When anomalous variability was detected, government bureaucrats would be alerted so that they could, if necessary, take action.

The ambition was to apply cybernetics to real-time economic control. The theory was simple: given sufficient data, better decisions could be made, and sooner. The project was abandoned when the Pinochet military dictatorship took power. But the underlying assumptions remain with us:

Decisions follow information, and systems can wait until they “know enough” to act.

In practice, they cannot. Real systems must act before sufficient information becomes available. Yet modern management, shaped by information technology and data systems, sustains the illusion that decisions are grounded in complete and reliable knowledge. This creates a tension—between theories built on information and computation, and the practical necessity to decide and act now.

It is in this gap that a new discipline begins to emerge: Action Theory. Its central insight reverses an unstated assumption: that we know before we do, i.e. that information is more primitive than action.

In many real-world systems, action is more primitive than information.

Whether we look at a judge in court, a regulator reviewing a corporate filing, or a social media platform moderating content, the same assumption appears. Facts are gathered. Authority is established. A decision is made. An outcome follows. This is our default picture of how law, governance, and rational systems are supposed to work.

The same model operates in the workplace. Through 360-degree reviews, customer feedback, and supplier surveys, we believe we can establish a sufficient evidential base to decide who to hire and fire, what products to make, and which inputs to buy.

There is nothing wrong with informed decisions—but the model is incomplete.

In real systems, especially those exercising binding power, this sequence cannot always be maintained. The court cannot adjourn indefinitely. Case loads demand decisions now. The flow of flagged content is relentless. Under these conditions, action must precede complete information.

This is not a flaw, corruption, or incompetence. It is a structural feature of systems that cannot defer action. A debt collector must decide whether to clamp your car, even if you contest the claim. A school must decide whether to issue a detention, even if the evidence is incomplete.

This is not accidental: it follows from constraint. Systems operate under time pressure, the cost of gathering information, and the need for continuity. It is not that organisations do not want to fully ground their decisions—it is that they cannot. Outcomes must occur before ideal grounding is available. Not every demand for fully informed decision-making can be satisfied; the best you can do is make ruinous failure sufficiently rare.

This reflects work I was involved in as a telecoms scientist. A data network has an essentially fixed supply of information-copying capacity, so given an offered load, some degree of quality attenuation is unavoidable. You can choose how it manifests, as packet loss or as delay, and how it is shared between flows by re-prioritising them. But you cannot eliminate it. Packets still have to be routed and scheduled under incomplete knowledge of their eventual impact on users.

Institutions face an analogous problem. They are presented with an offered load of decisions, but only a limited, time-bound capacity to ground those decisions in evidence and attribution. That load must be managed against the available supply of timely and reliable information. Some grounding is deferred, approximated, or lost entirely.

Action is not downstream of knowledge. It is forced under incomplete knowledge. Organisations are not consistently making “the right decisions”; they are making the least-wrong decisions available under constraint. In any decision system operating under load, a certain amount of attribution attenuation is unavoidable. All that can be done is for it to be routed and scheduled to where it does the least harm.
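One way to picture this routing is a toy scheduler. The sketch below is an illustration under stated assumptions, not a method from the original: each pending decision carries a hypothetical `harm_if_ungrounded` score, and only `capacity` decisions can be fully grounded in a given period. Every decision still goes ahead; the scheduler only chooses which ones receive the scarce grounding, greedily favouring the highest-harm cases.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    case_id: str
    harm_if_ungrounded: float  # hypothetical cost of acting without full grounding


def schedule_grounding(decisions, capacity):
    """Split a load of decisions into fully grounded and attenuated sets.

    All decisions still proceed; `capacity` only limits how many can be fully
    grounded first. Grounding is spent greedily on the highest-harm cases, and
    the harm carried by the rest is returned as the residual.
    """
    ranked = sorted(decisions, key=lambda d: d.harm_if_ungrounded, reverse=True)
    grounded = ranked[:capacity]
    attenuated = ranked[capacity:]
    residual_harm = sum(d.harm_if_ungrounded for d in attenuated)
    return grounded, attenuated, residual_harm


load = [Decision("A", 5.0), Decision("B", 1.0), Decision("C", 9.0), Decision("D", 2.0)]
grounded, attenuated, harm = schedule_grounding(load, capacity=2)
print([d.case_id for d in grounded])    # ['C', 'A']
print([d.case_id for d in attenuated])  # ['D', 'B']
print(harm)                             # 3.0
```

With fixed capacity, the residual harm can be moved between cases but not eliminated. The greedy rule is only the simplest choice; a real system might weigh harms quite differently.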

Once we see how decisions are actually made under constraint, a hidden structure begins to emerge. Every binding choice, however complex, rests on three simple questions:

What is this about?

Who can decide?

Why does it apply to me?

We can give these intuitive questions more formal names:

Determinacy — is there a defined object to decide over?

Attribution — who has the authority, and therefore the liability, for the decision?

Attachment — how does the decision bind this particular subject?

In an ideal system, all three are clear before action is taken. In real systems, they often are not.

The usual assumption is that bureaucracy is failing at its task of making good decisions. The deeper reality is different. Under pressure, systems cannot fully satisfy all three conditions at once. They must compromise them in order to maintain continuity.

In our opening Cybersyn example, the state attempted to ground macroeconomic decisions in live, comprehensive data feeds from industry. But in a more realistic view of decision-making under constraint, choices must be made under imperfect conditions:

When determinacy weakens, “what is this about?” becomes fuzzy

When attribution weakens, “who decides?” becomes abstract

When attachment weakens, “why me?” becomes assumed

Systems proceed with only partial answers to each of these questions:

Actions proceed with incomplete definitions of what their data even means. A flagged piece of online content may be classified as “harmful” or “misleading” without a stable or agreed definition.

They rely on abstract or class-level authority, rather than fine-grained assignment. Decisions are attributed to “the court”, “the regulator”, or “the platform”, without a clearly identifiable decision-maker responsible for the outcome.

The binding of the subject is assumed rather than demonstrated. A penalty is issued, access is restricted, or an obligation is imposed without a clearly identifiable moment at which the subject becomes bound.

The deeper implication is this: Cybersyn had the problem the wrong way round. The task is not to compute decisions from complete information. It is to determine when to stop searching for justification—and act anyway.
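The stopping problem can be made concrete with a deliberately simple sketch. It assumes that evidence arrives one item at a time, that each item can be summarised as a likelihood ratio for or against the claim, and that there is a hard deadline. The function keeps searching until its belief crosses a confidence threshold or time runs out, and then acts on its current best estimate either way. The labels `uphold` and `dismiss`, the threshold and the deadline are all hypothetical parameters, not part of the original argument.

```python
import math


def decide_under_deadline(evidence, threshold=0.95, deadline=5, prior=0.5):
    """Search for justification until confident or out of time, then act.

    evidence: iterable of likelihood ratios P(e | claim) / P(e | not claim),
    a hypothetical encoding of each new item of information.
    Returns (action, belief, steps_used, forced), where forced is True if the
    search ended (deadline reached or evidence exhausted) before the belief
    crossed the confidence threshold.
    """
    log_odds = math.log(prior / (1 - prior))
    steps = 0
    for ratio in evidence:
        if steps >= deadline:
            break  # time is up: stop searching
        log_odds += math.log(ratio)  # Bayesian update in log-odds form
        steps += 1
        belief = 1 / (1 + math.exp(-log_odds))
        if belief >= threshold or belief <= 1 - threshold:
            action = "uphold" if belief >= 0.5 else "dismiss"
            return action, belief, steps, False
    belief = 1 / (1 + math.exp(-log_odds))
    # Forced termination: act anyway on the current best estimate.
    action = "uphold" if belief >= 0.5 else "dismiss"
    return action, belief, steps, True


# Weak, mixed evidence: the deadline ends the search at a modest belief (about 0.76).
print(decide_under_deadline([2.0, 1.5, 0.8, 1.2, 1.1, 3.0, 4.0]))
# Strong evidence: the threshold is crossed after two items (belief about 0.99).
print(decide_under_deadline([10.0, 10.0]))
```

The `forced` flag is the interesting output: it records that the act went ahead on partial justification, which is exactly the gap that later has to be explained after the fact.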

Once we accept that outcomes are produced first as a condition of institutional survival under load, a simple corollary follows: if action comes first, explanation must come later.

Grounding for any individual decision is often reconstructed after the fact—from fragmented records in disparate systems, approximated from related data, or replaced with procedural audit trails that infer what must have occurred.

This is not a mistake or a malfunction. Post hoc justification is a necessary consequence of an action-first system. What appears as evasiveness—substituting procedural answers for substantive reasoning—is often structural. The opacity is not chosen; it arises from how authority is applied under constraint.

This pattern is not confined to any one domain. Courts, regulators, corporate HR, and safety-critical systems all operate under the same conditions. Acting under uncertainty is universal. Coercive, binding action under uncertainty is simply the case where the underlying structure becomes most visible.

Each of the elements described so far is, to some degree, already recognised in isolation. What is missing is a unifying account. Existing fields—political science, management theory, organisational design—rest on assumptions about the relationship between information and action that are rarely made explicit, and often do not hold under real-world constraints.

What is absent is a formal discipline that begins from those constraints rather than idealised conditions.

My own background is in computer science, where two foundational assumptions are deeply embedded:

Information is available (information theory)

Computation can complete (computability theory)

The gap is what happens when neither assumption holds—yet action is still required.

One might call this “informationless theory”, but that frames the problem negatively. A more constructive formulation is Action Theory:

the study of how systems terminate decisions and produce binding outcomes under conditions where complete information and full computation are unavailable.

This is not just a metaphor. It points towards a systems science, with its own invariants, constraints, and limiting behaviour. It describes the extent of what is possible—and the trade-offs that must be made—when action cannot be deferred.

To make this concrete, return to the earlier analogy with packet-switched data networks. These systems operate under offered load, with a limited capacity to copy and move information. As demand increases, they cannot preserve perfect service for all traffic: instant, reliable copying of every packet. Quality must attenuate.

In this setting, there are three variables: load, loss, and delay. But only two are independent degrees of freedom. Once any two are fixed, the third is determined. If load rises, the system must absorb it through increased delay, increased loss, or a combination of the two. There is no third channel through which the excess demand can disappear.
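The load/loss/delay relationship can be checked numerically with a textbook queueing model. The sketch below uses the M/M/1/K queue (Poisson arrivals, exponential service, one server, at most K packets in the system) as a stand-in; the original does not specify a model, so treat this as an illustration rather than the author's analysis. Holding the offered load fixed and varying the buffer size K moves the system along a single trade-off curve: fix the load and a target loss, and the delay follows.

```python
def mm1k(load, capacity, service_rate=1.0):
    """Loss probability and mean delay for an M/M/1/K queue.

    load: offered load rho = arrival_rate / service_rate (must be positive)
    capacity: K, the maximum number of packets in the system
    Returns (loss_probability, mean_time_in_system).
    """
    rho, k = load, capacity
    if abs(rho - 1.0) < 1e-12:
        probs = [1.0 / (k + 1)] * (k + 1)  # uniform occupancy when rho == 1
    else:
        norm = (1 - rho) / (1 - rho ** (k + 1))
        probs = [norm * rho ** n for n in range(k + 1)]
    loss = probs[k]  # an arrival finding the system full is dropped
    mean_in_system = sum(n * p for n, p in enumerate(probs))
    throughput = rho * service_rate * (1 - loss)
    mean_delay = mean_in_system / throughput  # Little's law
    return loss, mean_delay


# Same offered load, different buffers: loss falls and delay rises as K grows.
for k in (2, 5, 20, 50):
    loss, delay = mm1k(load=0.9, capacity=k)
    print(f"K={k:>2}  loss={loss:.4f}  delay={delay:.2f}")
```

Raising `load` at a fixed K increases loss and delay together: the excess demand has nowhere else to go.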

Decision-making systems exhibit a closely related structure. In the triad introduced earlier:

Determinacy — a defined object over which a decision is made

Attribution — the constructor of authority for that decision

Attachment — the instantiation of that authority so as to bind the individual

The analogue of quality attenuation is attribution attenuation. It is governed by two degrees of freedom:

Constructor fidelity — how far the decision can be traced back to an identifiable originator, decision-maker, or responsible constructor. Who actually made or authored this?

Instantiation traceability — whether there is a discrete, record-identifiable event that binds the subject in the particular case. What act made this apply to me?

As constraints tighten, grounding cannot remain complete. It attenuates along these two axes: loss of constructor fidelity, loss of instantiation traceability, or some combination of the two. As in networks, the system cannot avoid degradation; it can only determine how it is distributed. In practice, systems tend to preserve instantiation traces—records that something happened—longer than they preserve clear attribution of who made it and why.
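The claim that degradation can be redistributed but not avoided can be sketched with a small conservation model. This is purely illustrative: it assumes that each axis of grounding can be scored on a 0 to 1 scale, that a grounding shortfall is measured in the same units, and that the operator chooses a hypothetical `share_to_constructor` split. The default of 0.8 reflects the tendency noted above to keep instantiation records longer than clear attribution; it is an assumed value, not a measured one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grounding:
    constructor_fidelity: float        # 1.0: traceable to an identifiable author
    instantiation_traceability: float  # 1.0: a recorded act binds the subject


def attenuate(g, shortfall, share_to_constructor=0.8):
    """Absorb a grounding shortfall across the two axes.

    The chosen share is taken from constructor fidelity first; whatever one
    axis cannot absorb (because it has hit zero) spills onto the other.
    Returns the attenuated Grounding and any shortfall left unabsorbed,
    which means grounding has collapsed on both axes.
    """
    lost_c = min(shortfall * share_to_constructor, g.constructor_fidelity)
    lost_i = min(shortfall - lost_c, g.instantiation_traceability)
    # Spill back onto constructor fidelity if instantiation ran out.
    lost_c += min(shortfall - lost_c - lost_i, g.constructor_fidelity - lost_c)
    unabsorbed = shortfall - lost_c - lost_i
    new = Grounding(g.constructor_fidelity - lost_c,
                    g.instantiation_traceability - lost_i)
    return new, unabsorbed


start = Grounding(1.0, 1.0)
keep_records, _ = attenuate(start, 0.5)  # attribution absorbs most of the loss
keep_authors, _ = attenuate(start, 0.5, share_to_constructor=0.2)
print(keep_records)  # constructor fidelity about 0.6, traceability about 0.9
print(keep_authors)  # constructor fidelity about 0.9, traceability about 0.6
```

Under either policy the total remaining grounding is the same; only its distribution between the two axes changes.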

Action Theory is, in part, the formal study of this structure: the degrees of freedom along which grounding attenuates, and the limits that govern how systems continue to act when ideal conditions cannot be maintained. The purpose here is not to present the whole discipline, but to demonstrate the existence of an untapped formal domain.

This framework is not a matter of philosophy or opinion. It describes a structure: the layers from which authority is composed, and how they degrade under load. It reflects the constraints that real systems operate under, and the mechanics and dynamics that follow from them. It explains why systems do not wait for perfect grounding: because they cannot.

Its use is primarily diagnostic, not prescriptive. It makes visible the gap between action and justification that emerges as decision systems are placed under stress. In this sense, Action Theory is closer to a safety-critical systems discipline than to conventional management theory.

As a result, its applicability is cross-domain. Wherever decisions must be made under constraint—and especially where those decisions bind others—the same structure and dynamics are in play.

This implies a rethinking of how we understand legitimacy, and how we interpret error in that context. The traditional view is that mistakes in decision-making reflect some form of misconduct. That is sometimes true. But this framework allows for a more mundane explanation: many apparent “errors” are structural consequences of forced action before full information is available.

It invites a different kind of question, one that does not begin with moral judgement. A glitchy internet video call is not a moral failure, but an engineering one. The same perspective can be applied here. Rather than asking whether a decision was simply “wrong”, we can ask where its grounding was incomplete, what was assumed rather than evidenced, and which step in the stack failed to close the loop.
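The diagnostic questions above can be encoded as a simple checklist over a decision record. This sketch assumes a hypothetical `DecisionRecord` with one field per layer of the triad: a missing field means that layer was assumed rather than evidenced, and an attribution that names only a class-level body ("the court", "the regulator", "the platform") is flagged as abstract. It reports the open loops in stack order, from determinacy through attribution to attachment.

```python
from dataclasses import dataclass
from typing import Optional

# Class-level authorities, taken from the examples in the text.
CLASS_LEVEL_AUTHORITIES = {"the court", "the regulator", "the platform"}

# (layer, field) pairs in stack order; the field names are hypothetical.
LAYERS = [
    ("determinacy", "defined_object"),  # What is this about?
    ("attribution", "decision_maker"),  # Who can decide?
    ("attachment", "binding_event"),    # Why does it apply to me?
]


@dataclass
class DecisionRecord:
    defined_object: Optional[str] = None
    decision_maker: Optional[str] = None
    binding_event: Optional[str] = None


def diagnose(record):
    """Return (layer, reason) for every layer whose grounding is incomplete."""
    gaps = []
    for layer, field in LAYERS:
        value = getattr(record, field)
        if value is None or not value.strip():
            gaps.append((layer, "assumed, not evidenced"))
        elif field == "decision_maker" and value.strip().lower() in CLASS_LEVEL_AUTHORITIES:
            gaps.append((layer, "class-level authority only"))
    return gaps


moderation = DecisionRecord(
    defined_object="post labelled 'misleading'",
    decision_maker="The platform",
)
for layer, reason in diagnose(moderation):
    print(f"{layer}: {reason}")
# attribution: class-level authority only
# attachment: assumed, not evidenced
```

A real audit would need far richer evidence than one string per layer; the point is only that "which step failed to close the loop" is a checkable question rather than a moral one.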

What Action Theory offers is a new lens.

Decision systems do not wait for the world to be fully known, defined, and justified before they act. They act first, and then reconstruct the appearance of completeness afterwards.

This is not a critique of any particular institution, nor an accusation of failure. It is a description of how such systems behave when they are required to act under constraint.

The implication is not that such systems should stop acting, but that we should better understand the conditions under which they operate. We do not condemn construction as an industry because some buildings collapse; we seek to understand the conditions under which failure occurs.

The next step is to model and engineer these load and failure dynamics explicitly in decision-making systems, rather than continue to assume that the ideal order—knowledge first, action second—can always be maintained.

"binding outcomes must be generated under uncertainty." I disagree. The systems must be rebuilt to provide the judicial function that we need and that we pay for. Enough of running the money out the back door and leaving the people with judicial-like processed fakery.

Martin, I find this quite interesting. Two ideas.

First, this is how humans actually act for the most part: They take an action and then justify that action. Some of the justifications can be quite divorced from the actual internal reasoning that governed that specific decision, sometimes hilariously so.

Second, it might be useful to add the idea from medical testing, where a test has to be tuned: true positives and true negatives are the desired outcomes, but every test generates false positives and false negatives. In Action Theory, I suggest that in any particular system there is a tension that makes one of the two anchoring criteria automatically more critical. Explicit design choices need to be made when building a system: the cost of a false positive and the cost of a false negative should be weighed, and the system adjusted to minimize the cost of failure over many instances by avoiding the more costly failure mode more often than the less costly one.

If you are dealing with a system of law or an enforcement process, which failure mode is less costly or less troublesome and which failure mode is more consequential? For example, when a legal system lets go of attribution to enhance instantiation, is that better or worse for society than the reverse? Which loss is of greater consequence? Should that potential loss be minimized by revising the statute that creates the system in question, or is it more likely to require the addition of an auditing system that gets bolted onto the operational process?

And who makes that choice of what is more important or less important? And does this analysis of failure mode costs show that the system can operate within acceptable cost or expose from the start that one or both failure modes are actually so damaging that the system itself should not be used?

In the UK, hundreds of years of jurisprudence and process have put in place specific requirements of attribution and instantiation (among others), none of which allow for the failure modes inherent in the Single Justice Procedure (SJP), because allowing them, as the SJP does, automagically destroys the ability to create and carry out true justice. So judging the SJP after it has been institutionalized will not get us to the prior, and more important, question: can the SJP ever provide true justice, when it is designed from the start to break the protections against failure modes that other justice systems build in? Those systems protect strict attribution and strict instantiation, at the cost of being unable to handle every violation.

Perhaps the problem is actually that there are too many laws creating too many ways to commit a crime or a violation, and insisting on maintaining strict attribution and strict instantiation is the discipline that exposes that untenable and authoritarian failure.