Civilisation Attenuation and Synthetic Success

A category error at the heart of modern governance theory risks something worse than collapse: manufactured continuity that silently consumes other forms of adaptability, intelligibility and stability

When I branched out from telecoms into writing about information warfare, propaganda, and military intelligence programs, I never imagined I would eventually end up back at exactly the same architectural problems I had been working on in data networking. Yet governments and packet networks turn out to be two examples of the same deeper phenomenon: large distributed structures that must make decisions locally, without seeing the whole picture, while under pressure to keep functioning despite overload, uncertainty, and resource conflict.

Once you see that, it becomes much less surprising that the same misconceptions, failure modes, and compensating tricks repeatedly emerge in both domains. In particular, the key protocol underpinning today’s Internet, TCP/IP, pulls the same stunt as legal positivism: it prioritises one form of operational continuity — local packet forwarding and local decision closure — over almost every other form. The machinery keeps running, but the ability to reconstruct exactly why it runs, under whose authority, and toward what coherent purpose becomes increasingly fogged out.

This creates long-term governance problems, slowly arming a ruin risk for society.

In this essay, I draw parallels between a reframe of data networking — “quality attenuation” — and a reframe of governance:

“civilisation attenuation”.

In telecoms, networks are sub-optimised because they are unconsciously treated as if they “do computing work” — as in net-work. More throughput is assumed to mean more useful activity at the global scale. But packet networks do not manufacture value that way; they imperfectly preserve informational continuity under conditions of load and contention. The difference does matter.

Modern governance theory makes a strikingly similar mistake, but for lawful authority rather than computing. Institutions are often treated as engines of justice, truth, legitimacy, and rational administration. But under pressure, they increasingly function as something else entirely:

distributed adjudication forced to terminate ambiguity under conditions of partial observability and bounded reconstructability.

Structurally, that is the same problem a network router is solving. And just as Internet architecture accumulates hidden fragility when it optimises for packet forwarding (i.e. “work now!”) above all else, civilisations accumulate hidden governance fragility when operational continuity (“govern now!”) is preserved at the expense of what is real and defensible.

The machinery still runs, but lawful intelligibility progressively degrades beneath it.

Before delving further into the argument, I should note that readers often struggle to locate the layer at which I am operating.

They are personally experiencing bureaucratic malfunction inside institutions that appear rigged against them — perhaps unconstitutional, procedurally corrupted, or even outright criminal. As a result, they often interpret what I am writing through familiar political lenses: a UK-centric view of legality, indifference to justice, technocratic apologetics, or institutional critique.

But the layer I am describing sits beneath all of those — just as I once worked in telecoms at a level below almost all other engineers.

Many inter-network designs are theoretically conceivable, but not all can be feasibly implemented. There are cosmic, ludic, and ecological constraints on any architecture: the underlying physics, the rules of the game being played, and the terrain through which the game unfolds. My specialism was the game itself — for example, packet scheduling under load. Only a finite set of moves is available. Some aspirations are structurally unattainable, regardless of competence, goodwill, or ideology.

No doctrine was involved; these were hard mathematical and architectural limits.

I am making the same kind of argument here. The framework applies equally to liberal democracy, Marxism, techno-fascism, libertarian idealism, anarcho-capitalism, and every other large-scale coordination structure. It constrains not merely the policies that can be chosen, but the kinds of governance games that can be played at all. I am not advocating for a particular form of government. I am describing the hidden trade-offs between demand and supply for reconstructable legitimacy, and the structural limits they impose.

Beneath every civilisation lies a largely invisible game of reconstructable authority. It always exists, yet modern governance theory rarely describes it clearly.

In order to locate what I am saying, it may help to return to the “ghost court” question I have been exploring over the last two years.

The issue is not simply that the court name on the summons fails to map neatly onto a court defined in law, nor that the naming convention falls short of some minimum formal standard. The problem is subtler than that. When you take the dozens of different names, variants, references, and claims to authority — scattered across multiple categories of object and documentation — they do not resolve cleanly back to a single determinate authority object described in law.

The failure is one of lawful attribution, not administrative nominalism.

Ordinarily, we compress attributable chains of authority all the time. You do not expect a judge to produce their certificate of appointment in every hearing, or a courthouse to prove that it has been duly designated as a lawful venue for proceedings. You routinely pay invoices without demanding the incorporation certificate of the company or proof that the bank account genuinely belongs to them. Civilisation depends upon compressing these chains of reconstructability. Without such compression, social coordination would become impossibly expensive.

What the ghost court illustrates is the characteristic shortcut increasingly taken by modern governance to preserve operational continuity under scale. The compression is kept, but the chain behind it can no longer be unpacked when challenged. Reconstructable continuity is sacrificed: the ability to say clearly who acted, on what basis, under whose authority, and with what reciprocal accountability. Decisions still bind the public, but the reverse routing of liability and attributable agency progressively disappears into the mists of the machine.

This is not primarily a moral failure, ideological conspiracy, or technological accident. It is a recurring structural response to scaling pressure acting upon finite reconstructive capacity.

A similar shortcut phenomenon happens in telecoms networks. Packet scheduling systems attempt to infer the needs of different traffic flows so they can be treated appropriately under contention. The absence of explicit and trustworthy classes of service makes this extraordinarily difficult; proxies are substituted for expressed need. Given any offered load to a network, all the system can really do is — excuse my crudeness — decide who gets hit with the shit as best it can, based on what can be inferred.

Packet loss and delay cannot be made to disappear; they can only be redistributed across data flows in ways intended to minimise overall damage to quality of experience and end-user disappointment. The ideal network would perform instant and perfect data replication at a distance. No such network exists; all real networks are degradations from the ideal. The paradoxical reframe is that networks do not positively “do work” (despite the clue in net-work) but instead exhibit a fundamentally negative observable property:

the attenuation of quality from the ideal, manifested as packet loss and delay.
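To make the redistribution point concrete, here is a minimal sketch of a single overloaded link. It is an illustration only, not a model of any real scheduler: the flow names, the packet counts, and the `shed_by_priority` helper are all assumptions introduced for the example, and it tracks loss but not delay. Whatever order the flows are served in, the total loss is the same; the policy only decides who bears it.

```python
from typing import Dict, List


def shed_by_priority(offered: Dict[str, int], capacity: int,
                     order: List[str]) -> Dict[str, int]:
    """Serve flows in the given order; return packets lost per flow."""
    remaining = capacity
    lost: Dict[str, int] = {}
    for flow in order:
        served = min(offered[flow], remaining)
        remaining -= served
        lost[flow] = offered[flow] - served
    return lost


# Hypothetical offered load for one interval: 140 packets onto a 100-packet link.
offered = {"voice": 30, "video": 50, "bulk": 60}
capacity = 100

voice_first = shed_by_priority(offered, capacity, ["voice", "video", "bulk"])
bulk_first = shed_by_priority(offered, capacity, ["bulk", "video", "voice"])

print(voice_first)  # {'voice': 0, 'video': 0, 'bulk': 40}
print(bulk_first)   # {'bulk': 0, 'video': 10, 'voice': 30}

# The excess (40 packets) is identical under both policies; only its
# distribution across flows changes.
print(sum(voice_first.values()), sum(bulk_first.values()))  # 40 40
```

The attenuation is fixed by the offered load and the capacity; the scheduling choice is purely about allocation.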

A comparable reframe applies to governance. The ideal is perfect traceability and attribution of all decisions back to their originating authority, evidence, and lawful basis. No real civilisation achieves this level of reconstructability. In practice, there is always a finite capacity to justify decisions, preserve attribution chains, and maintain reciprocal intelligibility under scale.

Civilisation depends upon the maintainability of reconstructable attribution of authority. Civilisation attenuation is what happens when operational continuity is preserved despite the progressive weakening of those reconstructive foundations. Like packet loss in a network, it cannot be eliminated, only displaced, deferred, and managed.

What we are observing are the constraint mechanics of a broader class of large-scale distributed structures. Their defining properties are:

Rising coordination load — the increasing volume and complexity of information that must be combined to make decisions.

Finite attribution capacity — limited ability to trace decisions, claims, and authority back to reconstructable origins.

Mandatory closure pressure — the need to keep moving, deciding, routing, and enforcing even when attribution remains incomplete.
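The interaction of these three properties can be sketched as a toy model. This is an illustrative assumption, not a calibrated description of any institution: the load figures, the fixed `attribution_capacity`, and the `run` function are hypothetical. Each tick, new decisions arrive; only a fixed number can be closed with a fully traced authority chain. If closure is mandatory, the remainder is closed anyway, without reconstructable attribution. If it is not, the remainder waits.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Outcome:
    attributed: int  # decisions closed with a fully traced authority chain
    synthetic: int   # decisions closed without reconstructable attribution
    backlog: int     # decisions still waiting at the end of the run


def run(loads: Iterable[int], attribution_capacity: int,
        mandatory_closure: bool) -> Outcome:
    attributed = synthetic = backlog = 0
    for load in loads:
        pending = backlog + load
        traced = min(pending, attribution_capacity)
        attributed += traced
        pending -= traced
        if mandatory_closure:
            # Everything left over is closed regardless of grounding.
            synthetic += pending
            backlog = 0
        else:
            # Everything left over is deferred to the next tick.
            backlog = pending
    return Outcome(attributed, synthetic, backlog)


rising_load = [80, 100, 150, 200]  # hypothetical coordination load per tick

print(run(rising_load, attribution_capacity=100, mandatory_closure=True))
# Outcome(attributed=380, synthetic=150, backlog=0)
print(run(rising_load, attribution_capacity=100, mandatory_closure=False))
# Outcome(attributed=380, synthetic=0, backlog=150)
```

In both runs the same 150 decisions exceed attribution capacity. The only choice is whether they surface as visible delay or as closure without grounding.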

The rest of this essay explores the resulting drift toward what this framework calls synthetic continuity, synthetic success, and synthetic governance. Taken together, these produce civilisation attenuation:

the gradual loss of the conditions under which society remains intelligible, attributable, trustworthy, adaptable, and safe.

The danger is not immediate collapse. Quite the opposite.

The danger is operationally successful unreality.

It feels like this ought to be a social media meme:

“one secret trick they teach at law school”.

But thankfully the world is not quite that simple. There is a kind of trick, but it is usually implicit and, under many circumstances, entirely legitimate.

We have already seen how authority and explanation are routinely compressed: shortened trust chains, “ghost” entities that stand in for the true source, and institutions that continue enforcing decisions even when the originating chain of authority is no longer fully reconstructable.

Now we can define the mechanism more explicitly.

Civilisation is that which never has a day off. It has to persist and reproduce itself every moment of every day. Its defining characteristic is continuity. Unlike packet data, however, decisions cannot simply be “dropped”. Society must keep moving. Claims must be resolved. Enforcement must continue. Delay itself is finite; eventually something has to give under pressure.

The compromise increasingly taken by modern governance is this:

civilisation preserves operational continuity by projecting synthetic continuity after reconstructive grounding weakens.

Let us unpack that using a concrete example. In my own “ghost court” saga, the court itself became progressively fuzzy as an attributable legal object, while no actual court order appeared to exist. This is a loss of ontological continuity: uncertainty about what exactly the governing object even is.

Later, debt enforcement continued despite the matter being under judicial review, in apparent contradiction of the governing procedures themselves. This is a loss of epistemic continuity: uncertainty about whether the system still meaningfully knows and applies its own rules.

Yet the machinery continued to operate.

The state still pursued the debt — operational continuity — while progressively relaxing requirements for other forms of continuity: stable authority objects, attributable procedures, reconstructable justification. Continuity was operationally projected and protected while ontology and epistemology drifted underneath.

The coercion remained entirely real — somebody could still come and take my car away — but the reconstructable chain of authority and attribution had materially degraded.

The phenomenon is hardly unique to ghost courts or motoring cases. Platform moderation systems, algorithmic reputation scores, automated benefit sanctions, procedural banking exclusions, AI-generated decisions — many people have already experienced this attenuation of ontological and epistemic grounding, even if they lacked the language to describe it clearly.

This “one trick” is synthetic governance.

It is not fake governance; the actors, incentives, coercion, and consequences are all genuine. Rather, it is governance whose operational continuity outruns reconstructive intelligibility.

That is the important distinction:

Large-scale coordination systems cannot simultaneously maximise operational continuity, reconstructability, attribution, and throughput under rising load.

I learned something new about telecoms yesterday from my geopolitical work.

In the past I wrote extensively about RINA — Recursive InterNetwork Architecture. It is slightly misnamed, as it is neither a protocol nor really an architecture, but more of a design pattern. It describes the underlying recursive structure of distributed computing with minimal coupling and maximum cohesion — in other words, the greatest “bang for your network state buck”. Despite major technical advantages over TCP/IP, it has largely remained confined to niche research communities.

The revelation was that RINA is not fundamentally solving a technical problem, but a governance one.

The current Internet optimises around a single overriding objective: forward any packet anywhere. But not all packets are desirable packets, nor should all packets go everywhere; they need to be governed differently. As a result, TCP/IP and its surrounding ecosystem repeatedly reinvent separate mechanisms at different scales for privacy, identity, security, payments, age restrictions, copyright enforcement, trust, and access control.

There is no universal equivalent of a private local address like “192.168.0.1” operating recursively across scales, so each layer compensates with its own bolt-on governance machinery: login systems, moderation policies, certificate authorities, OAuth flows, ad-tech profiling, platform identities, KYC systems, content filtering, and endless procedural glue from the IETF.

TCP/IP preserves its own operational continuity while displacing almost every other governance problem outward into adjacent layers. Those layers then become increasingly complex, costly, opaque, and ineffective.

RINA takes the opposite approach. It preserves core architectural invariants recursively across scales. Its real advantage is not merely that it is technically cleaner than TCP/IP — though it radically simplifies networking itself — but that it dramatically reduces the surrounding scaffolding, duct tape, and synthetic governance required to make the wider digital ecosystem function.

That was the “aha!” moment.

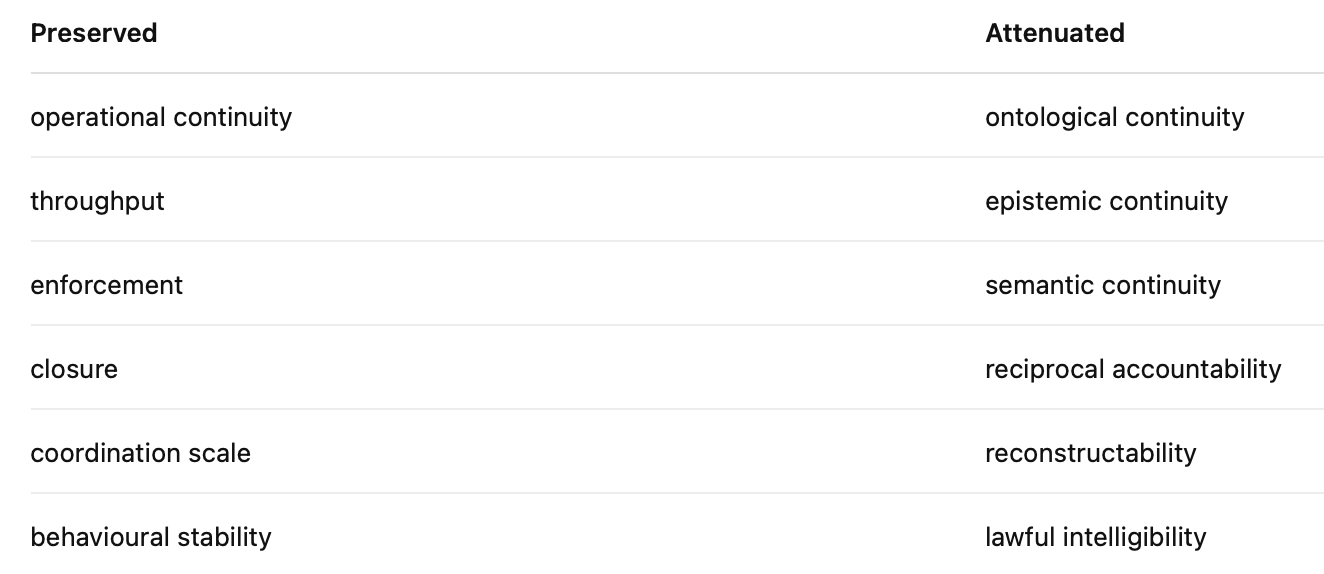

There are many forms of continuity inside any civilisation-scale coordination structure. Optimising aggressively for one alone can silently degrade all the others. What appears locally optimal may become globally pessimal.

Now comes the intellectual payoff:

there are many forms of continuity underpinning governance, not just operational.

Any large-scale coordination architecture will necessarily preserve some kinds while attenuating others. There are trade-offs. We cannot maximise everything simultaneously.

The descent path is always structurally similar:

Any governance structure experiences load from its users: applications, claims, disputes, appeals, transactions, enforcement demands.

Under pressure, it must preserve operational continuity — decisions still have to be made, institutions still have to function, enforcement still has to occur.

In order to maintain that continuity at scale, other properties progressively attenuate: reconstructability, attribution, semantic fidelity, reciprocal accountability.

It is simply “the result of the equations” in the same way that a passing lorry deforms a suspension bridge. It is neither good nor bad, but the natural consequence of static and dynamic forces acting upon the structure.

The deeper problem emerges one layer further down.

Eventually the bureaucracy loses corrigibility itself. The system can no longer reliably detect that it has lost ontological and epistemic grounding, because the mechanisms required to recognise the drift have already attenuated. The organism is blind to its own lack of grounding. It can’t tell it is “out of coverage” from truth.

There is no obvious error signal. From inside the process, nothing appears wrong.

The machinery still functions. Decisions still execute. Enforcement still occurs. Yet the underlying relationship between authority, attribution, meaning, and reality becomes progressively less reconstructable.

That is the essence of synthetic success.

If you are a law professor or political science lecturer, you may wish to sit down somewhere quiet before reading the next part, because I have something to tell you that could prove rather unsettling.

I have already explained how packet networking rests upon a category error: networks do not “do work”. Once this is understood, entirely different engineering possibilities emerge. Predictable performance technologies become possible that conventional Internet thinking struggles even to conceptualise. Networks can be run at over 100% offered load, with the excess demand shed with engineering precision. The claim therefore has a practical witness: the reframe is not merely rhetorical; it has operational consequences.

Now we can crystallise the equivalent category error in governance:

governing activity does not inherently generate lawful authority.

Modern governance theory routinely mistakes procedural persistence for lawful continuity. The implicit assumption is that if institutions continue operating and recognised procedures are followed, then the resulting decisions remain reconstructively legitimate.

That assumption only holds inside a limited and predictable region of operation.

It does not describe the failure modes in overload, when demand for proof of legitimacy exceeds the capacity to supply it.

The same hidden premise appears across legal positivism, utopian technocracy, administrative proceduralism, AI governance optimism, and digital platform governance. At relatively low attribution loads, where reconstructive grounding remains socially recoverable — “call the contact centre to ask why” — the simplification works reasonably well.

But as coordination load rises and systems come under pressure, operational continuity is increasingly preserved by attenuating other forms of continuity instead: reconstructability, attribution, semantic fidelity, reciprocal accountability.

The result is a progressive loss of ontological and epistemic grounding beneath an apparently functioning procedural surface.

In other words, reality begins to blur and disappear as synthetic success takes over.

This is not to say that procedures are useless. The point is subtler and more disturbing than that.

Procedures become unstable when detached from reconstructive reciprocity.

Merely being able to demonstrate that something happened is not the same thing as being able to reconstruct and justify the authority under which it happened.

Or more bluntly:

just because the machinery ran does not mean it remained lawfully intelligible.

We are now in a position to diagnose the “rot” in how legal positivism increasingly dominates administrative thinking. The naive assumption is that because an Assembly, Congress, or Parliament has assented to a governance procedure, lawful authority automatically follows from its continued operation. But this skips over the essential point:

law is, by definition, reconstructable and attributable authority.

What has happened instead is that a necessary and ordinarily legitimate shortcut has been taken — no different from an invoice arriving from a corporation without an attached copy of its certificate of incorporation. The company name itself stands in for the wider authority structure behind it. If uncertainty arises, the attribution chain can still be reconstructed through registries, officers, filings, and courts.

Legal positivism implicitly tolerates compressed attribution on the assumption that full reconstructability ultimately remains available when challenged.
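A minimal sketch of what "reconstructable when challenged" means as a data structure. Everything here is hypothetical: the registry entries, their names, and the `reconstruct` function are assumptions made for illustration, not a description of any real legal register. Each name points to the authority it derives from, and a chain is reconstructable only if it can be walked back to a root defined in law.

```python
from typing import Dict, List, Optional

# Hypothetical registry: each name maps to the authority it derives from,
# or to None when it is itself a root defined in law.
REGISTRY: Dict[str, Optional[str]] = {
    "Statute": None,
    "Court established by statute": "Statute",
    "Judge appointed to court": "Court established by statute",
    "Order signed by judge": "Judge appointed to court",
    # A name in daily operational use whose parent is not in the registry.
    "Notice from trading name": "Trading name",
}


def reconstruct(name: str,
                registry: Dict[str, Optional[str]]) -> Optional[List[str]]:
    """Walk back from name to a root; return the chain, or None if it breaks."""
    chain: List[str] = []
    seen = set()
    current = name
    while True:
        if current not in registry or current in seen:
            return None  # unresolvable name, or a circular reference
        chain.append(current)
        seen.add(current)
        parent = registry[current]
        if parent is None:
            return chain  # reached a root defined in law
        current = parent


print(reconstruct("Order signed by judge", REGISTRY))
# ['Order signed by judge', 'Judge appointed to court',
#  'Court established by statute', 'Statute']
print(reconstruct("Notice from trading name", REGISTRY))  # None
```

In ordinary operation nobody calls `reconstruct`; the name alone is accepted. The compression is safe only while the call would still succeed if someone made it.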

My own “ghost court” litigation suggests that this assumption can fail. More significantly, the state not only struggles to reconstruct the originating authority cleanly, but increasingly resists the proposition that such reconstruction is even required. We have moved outside the predictable region in which the doctrine remains stable.

That is when it becomes dangerous, as the foundational requirements for the rule of law are being undermined.

Legal positivism functions coherently only while:

ontological continuity remains stable — so “the court” remains a consistent and intelligible authority object,

attribution chains remain socially recoverable — so the authority behind decisions can still be traced and verified manually if need be,

reconstructive reciprocity survives — so the citizen retains symmetrical access to challenge and reconstruction mechanisms.

We saw a structurally similar phenomenon in my TV Licensing litigation. “TV Licensing” functions operationally as if it were a legal entity, despite actually being a trademark standing in for shifting institutional arrangements involving the BBC, Capita, and associated governance structures. Correspondence arrives from “TV Licensing”, enforcement proceeds under that identity, yet attempts to treat the entity itself as legally attributable collapse into procedural ambiguity.

This illustrates the deeper point: once attenuation of reconstructability progresses sufficiently,

procedural validity ceases to guarantee lawful intelligibility.

By “lawful intelligibility” I mean the ability of an ordinary person to reconstruct who acted, under what authority, according to which rules, and with what reciprocal accountability (so I know who to sue if it goes wrong).

That is not an attack on law; it is closer to thermodynamic limits on law.

It is a statement about the scaling limits of compressed authority under finite reconstructive capacity.

When I was at school, works like George Orwell’s Animal Farm were presented as warning tales about totalitarianism and tyranny. Such dangers were framed as either historical (Nazi Germany) or geographically distant (the Soviet Union, Maoist China, and similar regimes). This is not to diminish the horrors of overt despotism, only to note that it is not the only form of domination available to civilisation-scale systems.

I want to suggest something more disturbing that sits outside these conventional analyses.

Synthetic governance may ultimately prove more dangerous than tyranny.

In the classical form of tyranny, the inversion remains visible. Coercion is attributable: secret police, political prisons, blacklists, camps, disappearances. It is localisable and phenomenologically real. Dissidents know what they are resisting.

Synthetic governance is different. It is diffuse, procedural, ambient, distributed, and operationally normalised. Most of all, it is boring — ask me how I know. It arrives as workflows, routing systems, policy notices, moderation queues, algorithmic scoring, automated refusals, procedural dead ends, and endless administrative fog.

Classical tyranny preserves visible coercive ontology.

Synthetic governance progressively attenuates ontology itself.

Power becomes difficult to locate, difficult to challenge, and increasingly difficult even to describe coherently. The citizen no longer encounters an attributable authority so much as an operational environment. AI outputs, procedural routing, unaccountable policies, institutional abstractions, and ambient belief in “the system” gradually displace any meaningful expectation of reconstructable authority.

In such an environment, domination no longer depends primarily upon overt violence. Workflow momentum and psychological normalisation become sufficient to maintain compliance.

The eventual result is a bureaucracy experienced as unreal, untrustworthy, and opaque. These are not conditions under which legitimacy, peace, or long-term stability naturally flourish.

This is not the first time in history that civilisations have encountered the problem of synthetic success and attenuation. The later Roman Empire and the tragicomic bureaucracy of the Soviet Union both illustrate how legitimacy can progressively hollow out even while state machinery continues functioning on the surface.

The important point is that the dynamics described here represent a recurring structural equilibrium under scaling pressure. Similar dynamics have likely appeared throughout history wherever large coordination structures exceeded their capacity for reconstructable attribution. Presumably they would emerge in any sufficiently large non-human civilisation too.

The phenomenon is not fundamentally ideological or conspiratorial.

It is a consequence of finite reconstructive capacity.

The state does not necessarily conspire against the citizen. Rather, it increasingly cannot scale reconstructable attribution quickly or cheaply enough to match coordination demand — at least not without preserving deeper architectural invariants of the kind explored in recursive systems such as RINA.

The claim being made here is, in a sense, quite modest. Under sustained pressure and finite resources, bureaucracies naturally preserve operational continuity first. In doing so, they progressively relax requirements for stable governance objects (“ghost courts”), attributable procedures (“enforcement without orders”), and reconstructable chains of authority.

The machinery continues to function, but the basis on which it remains lawful, intelligible, and corrigible progressively degrades beneath it.

Civilisation attenuates under attribution load.

The timing of this insight is particularly apposite. AI now allows ordinary people to generate complaints, freedom of information requests, data subject access requests, legal submissions, and institutional challenges at unprecedented scale, speed, and sophistication. Machine learning dramatically expands the operational load that the public can apply to bureaucratic machinery.

At the same time, AI can equally be used to sustain ever greater operational closure, abstraction, procedural routing, and semantic substitution. Institutions equipped with automated customer service agents, workflow engines, and generative systems can increasingly evade demands for attribution, reconstruction, and accountable authority while still maintaining the appearance of responsiveness.

An AI civilisation may therefore become operationally superhuman, on both the supply and demand sides of governance, while remaining recursively non-corrigible. AI may massively amplify synthetic governance equilibria, projecting synthetic continuity faster than humans can reconstruct attribution.

That’s a scary prospect.

Scaling operational continuity without an equivalent reconstructive architecture only accelerates civilisation attenuation. In effect, we risk building a “TCP/IP civilisation”: one optimised for throughput and continuity projection while displacing governance complexity into ever larger layers of synthetic abstraction and procedural glue.

The deeper lesson from recursive architectures such as RINA is not merely technical. It is that preserving reconstructability and governance invariants across scales may ultimately matter more than optimising any single operational objective in isolation.

I greatly enjoyed reading Dmitry Orlov’s Reinventing Collapse many years ago. Those who survived the Soviet collapse often carry deep wisdom about how to endure institutional upheaval and live meaningfully amidst disorder. What I have gradually come to understand, however, is that collapse itself may not be civilisation’s greatest danger.

The greater danger may be an operationally successful synthetic civilisation — one that increasingly resembles a surreal administrative video game. The synthetic entities it spawns progressively lose the reciprocal intelligibility that makes society legible and navigable. I instinctively knew that receiving a summons bearing a court name I could not meaningfully reconstruct was a problem. It has simply taken me 18 months to understand why.

Reciprocal intelligibility is a precondition for lawful self-correction. Without shared ontological and epistemic grounding, the machinery simply rolls onward — “computer says yes” — while the possibility of challenge progressively disappears. Over time, civilisation can continue functioning while losing the ability to reconstruct what exactly is governing it, under whose authority, and according to which reality.

The endpoint of civilisation attenuation is not necessarily tyranny.

It is the progressive loss of meaningfulness and reconstructable reality — beneath an apparently functioning procedural surface.

Which arguably is worse for the human condition.

Ooooh, this is wonderful. I have a few thoughts.

Ilya Prigogine investigated how physical systems reorganize themselves as energy in the system rises. The system reaches a point where it could go one of two ways: reorganization at a higher level able to process more energy easily, or chaotic disorganization. When we look at mercantilism or central planning, what we find is that the system gets overwhelmed by a rising level of activity. What happens is that the system, as it is, essentially breaks and becomes reorganized at a higher/deeper level, in this case a market economy where prices provide better communication at greater efficiency. Prices operate at all levels, so perhaps it is the economic equivalent of RINA versus the TCP/IP of central planning.

At the current time, the world is experiencing rising activity levels. The market pricing system appears to be able to handle that rise on the economic and finance side, but the governance side of the civilizational "network" is failing.

Here's a thought: What is needed is something easily audited, where all the elements of any transaction can be traced all the way back to the source event. That sounds like blockchains. Very fast throughput, easily audited by the public, and fully applicable to governance activities at any level.

Properly set up, with public sourced code, and with proper ways to produce the desired information (the UI), any citizen can audit any aspect of the government's flow of money (theoretically). If properly set up, it would seem that a blockchain is capable of maintaining object relevance quite easily, but it requires that government open its systems up to the citizens.

Another aspect of the situation is that the large superstructure required for central planning went largely away when the price mechanism was allowed to operate with only general guidelines, thus hugely reducing the need for a government bureaucracy to administer an economy. Likewise, it may be possible to reconstruct the Law side of our civilization by creating an observable, auditable "price-mechanism" equivalent, thus allowing the government to shrink again. And of course, the lower down in the civilizational structure you push decisions, the smaller the central government needs to be. I suspect that one outcome of this process that you see, Martin, of a synthetic civilization, may end up being dealt with through competitive pressures at the level of governance that force central government to simplify and focus on only what it must do. I think Trump and his crew are in the process of applying these various ideas and strictures in what will likely become an enormous increase in auditability at the same time as the higher levels of our government are forced to shrink and push decisions lower, where auditability is easier.

The rising pressure you are seeing in the "Law" side of our civilization will force us onto either the chaotic low-energy system of a synthetic civilization or onto a path where systems that provide easy object attribution open up government and force power down to the level it is most applicable to.

I don't know about you, but I suspect that we are in agreement in hoping we end up on the latter line (though I'm sure it will have its own problems we will need to iron out).