Crushed by the algorithm

How X stopped being a social network and became an attention auction system

You should not be reading this article.

No, seriously, stop now.

You should not be reading these words.

You are still reading, aren’t you? Oh well, I did warn you.

If you are still reading this, you need to ask yourself why.

Was it your genuine considered choice?

Did someone choose to share it with you?

Or did a machine decide you were likely to react to it?

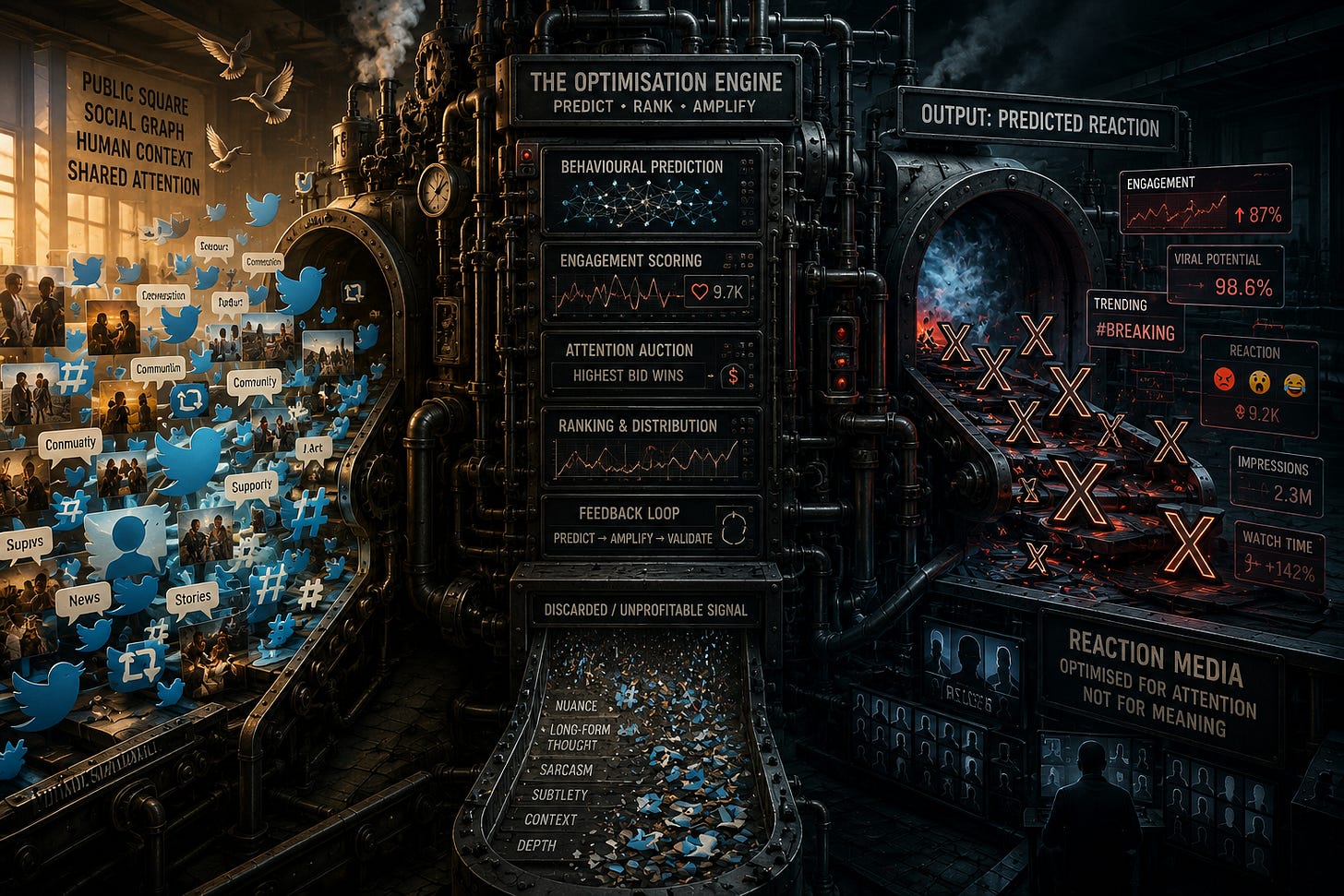

It is that distinction that I want to apply to the transition of social media into something else. Twitter and X are not the same product. The interface stayed familiar, but the social contract and the definition of signal changed underneath us.

Social media was stealthily replaced by reaction media.

Human judgement was replaced by machine prediction.

I hope this essay puts words to what many are feeling.

Twitter was built on a simple unwritten agreement, in three parts:

You bring your voice, attention, time, humour, relationships, and creativity.

The platform lets you reach people who explicitly choose to follow you, subject to light anti-spam and anti-abuse filtering.

Your audience will grow in proportion to your sustained effort and content value, with followers as the proxy metric for your contribution and success.

At its heart was a human-defined signal:

Follows and unfollows as a value judgement of expected utility

Mute and block as anti-signal to remove noise

Hashtags as human indexing to navigate complexity and discover new flows

Retweets as crude and lightweight social endorsement

The crux is this: humans evaluated the offered signal and routed it socially. The platform mostly routed it at a purely technical level.

There was always going to be an algorithm — we don’t need to indulge in any naïve absolutism about an era of purity that never existed.

Spam is real, abuse is real, and low-value noise is everywhere. Moderation costs do matter, and automation is necessary. Machine learning was inevitable as a solution.

That said, there is a difference between removing noise and deciding what counts as signal in the first place.

Twitter’s algorithm mostly cleaned the stream.

X’s algorithm increasingly defines the stream.

This is not just a change in the degree of algorithmic curation; the platform has become a qualitatively different beast.

For a long time, Twitter was seen as the digital town square. The metaphor is apt, as many of the properties of the physical commons did translate over:

A genuine town square makes all present visible to everyone else.

We have stable identities — our faces and bodies — that others can relate to, and that give social continuity.

We see who associates with whom, and how “talk of the town” is being socially mediated.

In this paradigm, we don’t just see each other; we see each other seeing each other. That is the “social” in social media.

On this basis, Twitter allowed the town square model to scale cognitively and practically. Then, on X, the model changed. The shared public square dissolved into personalised optimisation streams.

At the heart of Twitter was the social graph: who followed whom. You could dip into a feed to explore content from other sources, but the core was content you had consciously opted in to see.

X replaced that model with a behavioural prediction engine.

On Twitter, reading something meant someone chose it.

On X, reading something means something chose you.

Under Twitter, information carried social provenance.

Under X, provenance is replaced by probabilistic inference.

The problem is familiar to anyone who has attended a public event where the loudspeakers suddenly feed back into the stage microphone in a runaway amplification loop. What the system puts out as an algorithmically selected feed affects what goes in on the next iteration.

The probabilistic model shapes the distribution of future inputs.

That changed distribution shapes the outcome.

The changed outcome validates the prediction.

It amplifies the behaviours it predicts, and then mistakes the amplified result for validation.
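The loop above can be made concrete with a toy simulation. This is only a sketch under invented assumptions: two kinds of post, a ranker that hands out exposure in proportion to the engagement totals it last observed, and made-up reaction rates. The function name `simulate_feedback` and every number here are hypothetical and say nothing about how X is actually built.

```python
def simulate_feedback(rounds=10, true_rate=(0.10, 0.12), start_share=(0.5, 0.5)):
    """Toy feedback loop between exposure and observed engagement.

    true_rate   -- assumed per-view reaction rate for post types A and B
    start_share -- initial fraction of the feed given to A and B
    Returns the exposure shares after each round, starting with round 0.
    """
    share = list(start_share)
    history = [tuple(share)]
    for _ in range(rounds):
        # Engagement the system observes: exposure times the true reaction rate.
        engagement = [s * r for s, r in zip(share, true_rate)]
        total = sum(engagement)
        # The ranker treats raw engagement totals as its prediction, so the
        # next round's exposure is proportional to last round's totals.
        share = [e / total for e in engagement]
        history.append(tuple(share))
    return history


if __name__ == "__main__":
    for step, (a, b) in enumerate(simulate_feedback()):
        print(f"round {step:2d}: A={a:.2f}  B={b:.2f}")
```

In this toy model, post type B is only slightly more reactive than A (0.12 against 0.10), yet after ten rounds it holds about 86% of the feed. The totals the ranker reads as evidence already contain the exposure it handed out in the previous round.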

A tweet on Twitter was a direct signal to others; a post on X is more like a bid in an eBay-style attention auction. The “winner” in any presentation slot is whoever triggers the greatest predicted engagement score in an opaque and shifting probabilistic ranking game.
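The contrast between a tweet as a direct signal and a post as a bid can be sketched in a few lines. This is an illustration under assumptions of my own, not a description of either platform's code: the `Post` fields, the `follow_feed` and `auction_feed` functions, and the scores are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Post:
    author: str
    followed: bool               # does the viewer follow this author?
    timestamp: int               # larger means more recent
    predicted_engagement: float  # hypothetical model score for this viewer


def follow_feed(posts):
    """Social-graph routing: only followed authors, newest first."""
    chosen = [p for p in posts if p.followed]
    return sorted(chosen, key=lambda p: p.timestamp, reverse=True)


def auction_feed(posts, slots):
    """Attention auction: every post bids; the top predicted scores win the slots."""
    ranked = sorted(posts, key=lambda p: p.predicted_engagement, reverse=True)
    return ranked[:slots]


posts = [
    Post("friend", True, 100, 0.02),
    Post("colleague", True, 90, 0.05),
    Post("stranger", False, 50, 0.40),
]

print([p.author for p in follow_feed(posts)])      # ['friend', 'colleague']
print([p.author for p in auction_feed(posts, 2)])  # ['stranger', 'colleague']
```

In the first function the viewer's own follow decisions determine what appears. In the second, following plays no part in the ranking at all, and the most recent post from a friend loses its slot to a stranger with a higher predicted score.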

There is no need for any malicious suppression or covert agenda.

Feedback loops and optimisation dynamics are enough to explain the screeching.

In the Twitter model, there was no effort to optimise at the local post level. No one message into the digital ether had to “carry the load” of justifying itself in isolation. Our signal could aim for truth, depth, irony, coherence, and subtlety — prioritising long-term audience value over immediate rewards.

Announcing the loss of a loved one might not generate engagement; the “heart” button may feel inappropriate as a marker, as there is not much to like. The intangible reaction was 100% downstream of the tweet itself. All of the measurable proxy behaviours (clicks, replies, likes, reposts, dwells, plays, opens) were further downstream of that.

What X tries to do is take those downstream proxies and move them upstream, pre-selecting content predicted to trigger engagement and giving it more visibility.

X does not amplify signal. It amplifies what its proxies can measure.

But here’s the catch. Under X’s telos — its implicit ultimate direction — signal that cannot be proxied by the algorithm becomes systematically disadvantaged.
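A minimal sketch shows why unproxied signal loses out. Assume, purely for illustration, that visibility depends on a weighted sum of countable behaviours. The weights, the `proxy_score` function, and the example posts are all invented; the real ranking formula is not known from this essay.

```python
# Hypothetical weights over the behaviours a system can count.
PROXY_WEIGHTS = {
    "likes": 1.0,
    "replies": 2.0,
    "reposts": 1.5,
    "dwell_seconds": 0.1,
}


def proxy_score(observed):
    """Weighted sum of measurable proxies; anything without a weight is ignored."""
    return sum(weight * observed.get(name, 0.0)
               for name, weight in PROXY_WEIGHTS.items())


# "quiet_reflection" stands in for value that has no counter attached to it.
condolence = {"likes": 2, "replies": 1, "quiet_reflection": 500}
outrage = {"likes": 40, "replies": 60, "reposts": 25, "dwell_seconds": 30}

print(proxy_score(condolence))  # 4.0
print(proxy_score(outrage) > proxy_score(condolence))  # True
```

However large the unmeasured value is, it contributes nothing to the score, because the sum only ranges over the proxies that have a weight.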

What this results in is a kind of sugar-and-caffeine maxxing.

Not poison.

Not evil.

Just a system optimised for stimulation rather than nourishment.

In this case sugar is instant engagement. Caffeine is stimulation and urgency. Whereas nutrition is meaningful signal.

A platform optimised for immediate content consumption will inevitably select for sugar and caffeine over nourishment.

The result is a strange kind of instability. Just like someone with the coffee jitters, X is all spikes, bursts, and resets. Trends churn and disappear in an instant. Meanwhile, you show a tentative interest in some hobby subject, and it floods your feed.

This isn’t just memory loss. It is a collapse of temporal coherence, as the network can no longer sustain long-duration social narratives. Everything becomes reaction and velocity. The statistically optimised feed converges toward sameness.

The loss of hashtags costs us our connection to the recent past. Everything is ephemeral now that we have moved from indexed memory to probabilistic resurfacing. Content is no longer stored socially and navigated semantically. It is surfaced transiently, based on predicted relevance.

You could call this “algorithmic presentism” — a kind of temporal distortion. Our very sense of time and place is being warped by the construction of a perpetual synthetic “here and now”. There is no town square; the town is now a mirage.

This is not merely a technical change in distribution mechanics. The issue isn’t just epistemic — a shift in what is considered relevant. It is deeper than that — it is teleological.

In other words, this is not a gripe about the algorithm measuring value badly. It is a struggle with the platform trying to achieve something entirely different to what it used to aim for.

In the Twitter days, the implied telos was human association, relationship, and expression.

Whereas in the new X telos, the system optimises for maximum measurable engagement and attention allocation.

The platform didn’t merely change how signal was measured.

It changed what the platform is for.

Human emotional reactivity became the raw material of the platform. This paradigm shift has the consequence of inverting the social contract.

Twitter allowed a form of digital citizenship. We were members of communities with relatively stable audiences, continuity, and social context.

On X, our “audience” is no longer a stable community of followers. It is the temporary output of a prediction system.

As a result, we stopped being members of a community and became competitors in a reaction economy.

Users still pour their life force into creating content, engaging in replies, and building networks of association. But the supports for the “town square” model have eroded and collapsed:

Continuity is disappearing. Most posts go nowhere, a few “ignite” — resulting in a skewed sense of who is present and loss of situational awareness.

Followers have weakened. Numbers have become stagnant; there is little meaning in following. The feedback loop of contribution and progress has broken. Yet no new success proxy metric has emerged.

Reach became conditional. I notice this with my “photo walks”, where repeated posts of images that the algorithm cannot evaluate, designed to make sense as a series, are suppressed.

On Twitter, silence could mean respect or uncertainty.

On X, silence is pathologised. Non-reaction is a proxy for failure, not contemplation.

Good human discourse tolerates uncertainty, irony, and incompleteness.

Reaction systems do not.

They reward immediate legibility, emotional clarity, and decisive polarity.

So the platform selects against ambiguity itself.

Beauty, truth, and depth are only weakly legible to the machine.

Legibility to AI replaces meaning as the criterion for visibility.

Sarcasm is no longer content.

Hashtags are no longer content.

Meaning itself is no longer content.

Only reaction is content.

The system no longer asks, “Is this meaningful?”

It asks, “Will this produce measurable response?”

The obligations of users stayed the same.

The obligations of the platform changed.

These have not been reconciled.

The result of a broken social contract is a feeling of betrayal. Visibility, reputations, and livelihoods are tied to outcomes that are increasingly decoupled from effort and merit. The replacement of human selection (with noise) by algorithm (seeking to eliminate noise) puts creators at the mercy of ever-shifting, opaque prediction systems.

The resulting unpredictability leaves a kind of “gaslit” feeling.

You turn up, do your day’s work online, but the rewards don’t accumulate.

Philosophy, art, theology are all downplayed. Outrage, controversy, pathos are up-rated. The end game is an incentive collapse: those content forms that are no longer algorithm-friendly depart the platform, cementing the belief they were of no value in the first place.

Humans adapt themselves to what the system rewards. But what it rewards is no longer aligned to what I have to say. The environment is increasingly hostile to contemplative processing of tentative ideas. The platform maintains the illusion of the old value system, while substituting a new one.

It is unclear whether human-defined signal can survive industrial-scale optimisation.

X is no longer a social network. It has instead become an AI-mediated attention allocation system with social features. Human behaviour became both the product and the training data.

If an idea doesn’t perform according to the values of reaction media, it effectively disappears — no matter what its social importance.

I feel a kind of frustration and sadness using X. My own work requires me to explore the world and identify ideas worth aggregating and relaying. At the risk of being a hypocrite, I admit that most of my own content intake is algorithmically curated.

I don’t plan to leave X, and don’t condemn it as evil. There is nothing necessarily wrong with X. It is coherent engineering pursuing coherent goals.

But those goals are no longer aligned with the values many users thought they were participating in.

The deeper problem is not that the algorithm is bad.

It is that we mistook an optimisation system for a social space.

And now that the optimisation has become visible, the illusion is collapsing.

The interface still resembles a social network.

The underlying reality no longer is one.

As I said, you should not just read this.

You should decide what is worth reading for yourself.

Consciousness is still the mover behind all platforms. Increase the conscious awareness and the platforms respond accordingly. That means that the current social media atmosphere is exactly what we need to keep increasing consciousness. We are only run by robots if that's what we believe.