The thermodynamics of propaganda

What the “chans” and Wikipedia reveal about the limits of narrative control

Why do obviously false narratives keep winning — even when everyone knows they’re false?

In the last few weeks I’ve had a step-change in how I understand information warfare, and how systems like the mass media co-exist with power. The shift is subtle but decisive. It moves away from treating truth as something that directly determines outcomes, and toward asking a different question:

Which “truth” can actually support collective action when pressure forces decisions before certainty is available?

Systemic stress rises due to challenge or crisis. Under these conditions, people and institutions do not act on what is absolutely true, but instead act on what can be sustained in the moment. So when “hard truth” cannot carry the load of being the “official truth”, something else must — and that “something else” is what we usually call propaganda.

Truth still matters, but as a constraint, not a driver. When authority is questioned and load is applied, governance systems do not act on what is true in the abstract. They act on what can be stabilised socially and institutionally.

Because facts only matter once they are accepted as authoritative, the problem shifts from truth itself to how truth is attributed. The focus moves from establishing facts to attributing them:

what distinguishes truth from “truth”,

and who has the power to decide where that distinction is drawn.

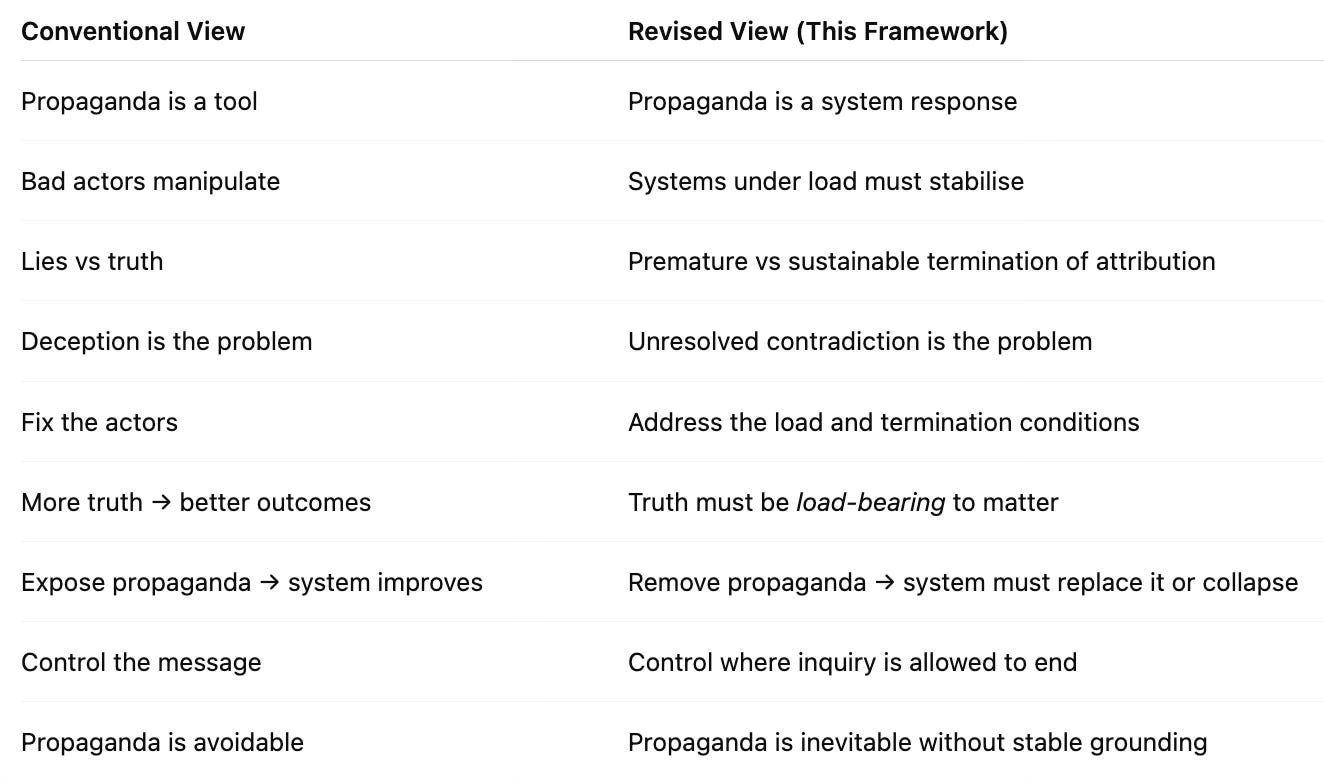

The theory I am developing is complementary to existing work on propaganda and narrative control. Classical approaches, such as those of Jacques Ellul and Noam Chomsky, analyse how beliefs are formed and shaped within populations. They are rich, detailed, and psychologically grounded. What I am exploring is orthogonal to that: a thinner, more abstract layer that defines the constraints under which any system of belief, authority, and action must operate.

This layer cuts across domains — law, media, politics, open-source intelligence, even personal relationships and addiction. It does not explain specific narratives or events. Instead, it defines the conditions under which they can exist. While incentives, power, and coordination determine which narratives are selected, this model explains why any narrative must be selected at all under load.

A useful analogy is the difference between meteorology and physics. Meteorology studies the weather — the patterns, systems, and dynamics we observe. Physics constrains what kinds of weather are possible in the first place. The argument here is that information systems have both. Much of what we call propaganda belongs to the “weather”. But beneath it lies a deeper structure that explains why propaganda is so persistent, and why attempts to eliminate it so often fail.

The “aha!” — that propaganda isn’t the enemy but the symptom — did not arrive fully formed. It emerged as I began comparing different kinds of information environments, and how they behave under pressure.

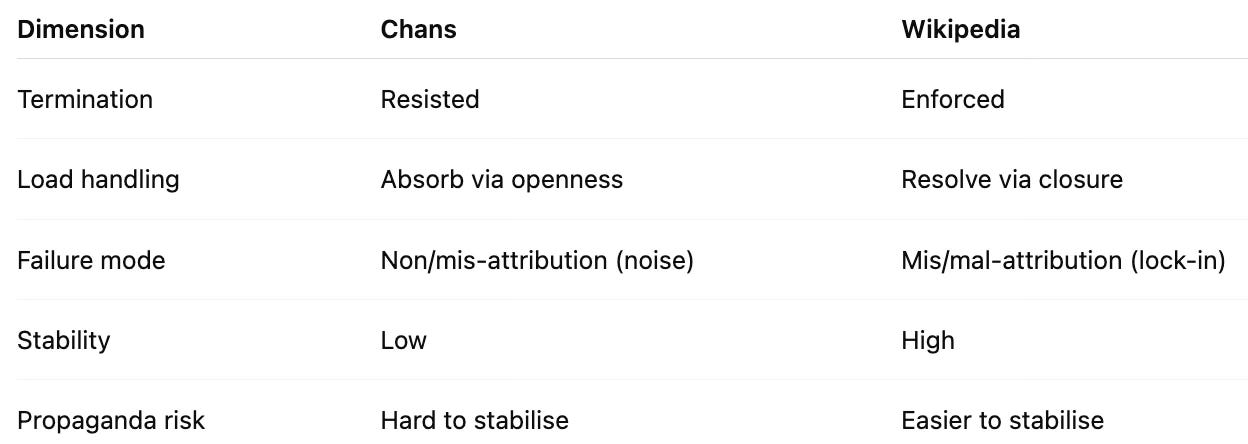

This becomes clearer if we look at two seemingly opposite systems:

The chans — 4chan, 8chan, and 8kun — chaotic, anonymous bulletin boards with minimal constraint beyond the law, where questions are not allowed to settle without evidence.

Wikipedia — a totem of authority in the digital age — operating very differently from traditional models like Encyclopaedia Britannica, enforcing early closure of questioning so that shared understanding can stabilise.

Most analyses of these platforms focus on their relationship to truth — whether they produce it, distort it, or undermine it. That is not the question I am asking. What matters here is something more structural: how claims to truth are grounded, challenged, and ultimately resolved.

In other words, the key difference is not what is said about the truth, but where and how inquiry into a claim is allowed to terminate.

I have produced two works over the last few years that aggregate the core thesis of what is going on — Open Your Mind to Change as the “big picture” of the information war, and On Q as the specifics of how distributed, leaderless resistance to narrative control is designed and deployed.

Looking back at these essay collections up to around 2020, the core hypothesis and structure hold. What was missing was an understanding of the structural inevitability of what is happening, and how entities like the chans and Wikipedia fit into that framework.

Rather than truth being its own public good (or harm), the conflict is better understood in terms of the distinct failure modes of how attribution is terminated:

Non-attribution → e.g. rumours on social media with no source

Misattribution → e.g. mainstream reporting that gets a story wrong but sticks

Malattribution → e.g. deliberate intelligence ops or propaganda campaigns

To define these more rigorously:

Non-attribution → no grounding (confusion, fog), where claims circulate without evidence — a kind of societal gossip

Misattribution → wrong grounding (error, incompetence), where claims fail under scrutiny but persist through institutional reinforcement

Malattribution → deliberately false grounding (weaponised narrative), where misleading claims are actively constructed and propagated
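The three failure modes above can be read as a small decision table. The Python sketch below is one illustrative encoding, offered as a reading aid rather than as part of the theory itself. The names `Attribution`, `Claim`, and `classify`, and the three boolean fields, are assumptions introduced here for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Attribution(Enum):
    GROUNDED = "grounded"
    NON_ATTRIBUTION = "non-attribution"  # no grounding
    MISATTRIBUTION = "misattribution"    # wrong grounding, by error
    MALATTRIBUTION = "malattribution"    # false grounding, by design


@dataclass(frozen=True)
class Claim:
    # All three fields are hypothetical simplifications of a messy judgement.
    has_source: bool       # is any grounding offered at all?
    source_holds_up: bool  # does that grounding survive scrutiny?
    deliberate: bool       # was the false grounding constructed on purpose?


def classify(claim: Claim) -> Attribution:
    """Map a claim onto the essay's three failure modes, or onto 'grounded'."""
    if not claim.has_source:
        return Attribution.NON_ATTRIBUTION
    if claim.source_holds_up:
        return Attribution.GROUNDED
    if claim.deliberate:
        return Attribution.MALATTRIBUTION
    return Attribution.MISATTRIBUTION
```

In practice none of these three questions has a clean yes or no answer, which is exactly why deciding them is contested. The sketch only shows how the categories relate to one another.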

Like most people at the time, I assumed the battle was over facts. That turns out to be too narrow. Facts constrain what can happen, but they do not determine what action is taken. The real contest is not over facts, but over meaning — and who has the power to decide when that meaning is settled.

To understand how attribution actually resolves — whether it converges on truth or one of its failure modes — we have to look at what happens under load. Attribution does not occur in a vacuum. It is always subject to pressure, and that pressure determines where inquiry is terminated.

The most obvious source is adversarial: actors who seek to mislead or control inject false narratives by design. But this is only part of the picture. There are also constraints on time, attention, and emotion, as well as institutional limits — what sources are considered acceptable, what questions can be asked, and what conclusions can be expressed without consequence.

Despite this, decisions still have to be made — “take the jab or not”. Questions cannot be held open indefinitely. Where load is low, or grounding is strong, systems can tolerate prolonged open inquiry. Even then, at some point, someone or something must terminate attribution — whether or not the question has actually been resolved.

This allows us to reframe systems like the chans and Wikipedia. They are not simply competing sources of truth. They are different architectural strategies for terminating attribution under load. The question shifts from “what is true?” to “where, and how, is truth allowed to terminate?”

That contrast turns out to be revealing — and it leads directly to a deeper understanding of the nature and role of propaganda. To see it clearly, we need to examine each environment in turn.

The chans — 4chan, 8chan, and 8kun — are routinely described as chaotic, hostile, and epistemically toxic: anonymous, unmoderated, breeding grounds for conspiracy, trolling, and extremism. That description is accurate as far as it goes. Yet it misses their deeper structural function.

The defining feature of the chans is not the content they host, but how they handle attribution. Where most systems rush to stabilise claims under pressure, the chans systematically refuse premature termination.

Attribution under pressure

When load is applied — adversarial attacks, emotional urgency, institutional demands — most environments are forced to close questions fast. This is precisely where misattribution and malattribution take root: claims are accepted not because they are adequately grounded, but because they are sufficiently socially acceptable for action to proceed.

The chans operate in the opposite direction; social acceptability is repudiated. Their culture insists on grounding (“sauce?”) and subjects every claim to immediate, often brutal scrutiny. Authority shortcuts are deliberately disabled: anonymity strips away identity as a source of legitimacy, while ephemerality prevents reputational capital from accumulating. Each claim must stand — or fall — on its own.

The cost of refusal

This resistance to closure carries clear costs. The chans rarely converge on stable truth. Instead, they generate high volumes of non-attribution: noise, speculation, half-formed ideas. Threads fragment, competing narratives proliferate, and consensus remains elusive. From the outside, this looks like pure dysfunction. Structurally, it is the price of keeping attribution open under sustained pressure. This does not imply the chans produce reliable truth. They can amplify falsehood as easily as expose it. Their function is structural: to resist premature closure, not to guarantee correctness.

Adversarial epistemology

More importantly, the chans do not merely tolerate challenge — they institutionalise it. Trolling, ridicule, and deliberate deception are not bugs; they are features of the environment. Participants must operate in a setting where misleading claims are expected and must be actively detected and dismantled.

This produces a form of adversarial epistemology: a claim survives not because it feels plausible or aligns with priors, but because it withstands relentless attack. The self-described “autists” are the part of the user community with the weakest attachment to prior beliefs, and hence the ones who apply the greatest adversarial testing pressure.

This does more than filter claims. It trains participants to operate under adversarial conditions — where deception is assumed, and attribution must be actively defended. The result is not consensus, but capability.

Resistance to malattribution

In such an environment, deliberately constructed false narratives (malattribution) struggle to stabilise. False claims still appear, but they lack protective layers — no institutional authority, no persistent narrative scaffolding, no reputational shield. They are continuously exposed and usually shredded.

The chans therefore default to openness rather than forced closure. Uncertainty is preserved, often at the expense of coherence or usability.

What the chans actually are

It is a mistake to view the chans as producers of truth — or even as reliable sources of information. They are neither; they can structurally succeed without delivering truth. Rather, they function as a mechanism that actively resists the conditions under which propaganda can take root and stabilise.

They achieve this by refusing to let attribution terminate easily, even when the pressure to do so is intense.

The limit of the model

This strength is also their fundamental limitation. Because the chans resist termination so effectively, they cannot reliably bear load: they do not produce a definitive answer to anything. They cannot coordinate sustained action, bind decisions to shared conclusions, or convert insight into collective movement. They excel at exposing contradictions and shattering premature closures, but they do not resolve them.

Interim synthesis

The chans therefore occupy a distinct ecological niche in the information system. They are not a substitute for institutional authority, but a persistent counter-pressure against its abuse. They keep attribution open longer than most systems can tolerate — particularly against malattribution — while accepting the resulting instability as the necessary trade-off.

At the opposite extreme sits Wikipedia. It presents itself as open, collaborative, and neutral, yet operates as a highly structured mechanism for stabilising claims. Where the chans resist closure, Wikipedia is explicitly designed to bring disputes to an end.

The key, once again, is not what is said, but how attribution is handled. Wikipedia’s defining feature is its architecture for terminating attribution in a consistent, repeatable way.

Attribution under pressure

Under load, systems must act. Where the chans absorb pressure by keeping questions open, Wikipedia resolves it by closing them. This is accomplished through policy, a strict hierarchy of sources, and editorial consensus.

Attribution is channelled through “reliable sources,” which serve as authorised endpoints for inquiry. Once a claim is backed by such sources, it is treated as sufficiently grounded for inclusion. Competing claims — even if true — gain little traction without this backing.

The mechanism of closure

Wikipedia does not claim to determine truth directly. Instead, it determines which sources are admissible, and therefore where attribution is allowed to terminate. The operative question shifts from “Is this true?” to “Is this supported by recognised authority?”

Editorial consensus then provides the final stabiliser. Disputes are not left open indefinitely; they are resolved through processes that prioritise convergence over prolonged uncertainty.

The benefit of enforced termination

This architecture delivers stability. Articles converge on coherent narratives, contradictions are minimised, and users receive a consistent view of events. Non-attribution is effectively suppressed: claims lacking acceptable grounding are excluded.

From a user perspective, the result is highly functional. Decisions can be made, shared understanding becomes possible, and coordination follows.

The cost of closure

Yet enforced termination carries its own failure modes. Misattribution can become locked in when incorrect claims are repeatedly reinforced by “reliable sources.” More critically, malattribution can stabilise when the underlying authority itself is captured or constrained.

Because Wikipedia terminates attribution relatively early, it limits opportunities for adversarial challenge. Once a narrative is established, dislodging it — even with new evidence — becomes difficult.

Protection of narrative stability

Unlike the chans, where claims face continuous attack, Wikipedia shields established narratives through policy enforcement and editorial control. Participation is structured, and deviation from accepted standards can result in exclusion.

This does not eliminate error or manipulation; it relocates them. Failures move from open contest into the structure of authority itself.

What Wikipedia actually is

It is a mistake to view Wikipedia simply as a neutral repository of knowledge, or merely as a vector for propaganda. Structurally, it functions as a system that converts contested claims into stable narratives by enforcing the termination of attribution.

It does this by deciding where inquiry may end — and ensuring that it ends there consistently.

The limit of the model

This strength has a corresponding vulnerability. Because Wikipedia depends on early, stable termination, it is fragile when the authorities it relies upon are incomplete, biased, or under pressure. In such cases, the system continues to operate — but around a distorted map of reality.

It can carry load, but not necessarily truth.

Interim synthesis

Wikipedia therefore occupies the complementary position to the chans. Where the chans resist closure and preserve uncertainty, Wikipedia enforces closure and produces stability. Both systems respond to the same underlying constraint: the need to terminate attribution under load.

One fails by never fully resolving the question. The other succeeds by resolving it — but risks doing so too soon.

The crucial point is not that the chans are better at producing truth. By design, they cannot produce stable, shared conclusions at all. There is no canonical “chan answer” to anything, because the system refuses to terminate attribution in a way that can carry load. Uncertainty is preserved at the cost of stability.

By contrast, Wikipedia’s perceived bias is not primarily a failure of its editors or founders, but a structural consequence of how it terminates attribution. Systems that rely on authoritative sources and consensus must converge on narratives that can be stabilised under pressure. Coherence is preserved at the risk of locking in error.

This creates a selection effect. Ideas that are simple, institutionally supported, and easy to enforce as consensus become natural termination points. More complex, adversarial, or structurally disruptive claims struggle to stabilise, regardless of their truth.

Termination is therefore a selection process: information governance systems do not converge on truth; they converge on what can be stabilised under load.
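The selection effect can be sketched as a toy decision rule. This is a minimal illustration under stated assumptions, not a model of any real platform. The `Narrative` fields, the 0-to-1 scales, and the `evidence_bar` and `load_threshold` parameters are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Narrative:
    name: str
    evidence: float   # how well grounded the claim is, 0..1 (hypothetical scale)
    stability: float  # how easily it can be held as consensus, 0..1 (hypothetical)


def terminate(
    candidates: Sequence[Narrative],
    load: float,
    evidence_bar: float = 0.9,
    load_threshold: float = 0.5,
) -> Optional[Narrative]:
    """Return the narrative the system settles on, or None if inquiry stays open."""
    if not candidates:
        return None
    # Fully grounded claims can close the question on their merits.
    grounded = [n for n in candidates if n.evidence >= evidence_bar]
    if grounded:
        return max(grounded, key=lambda n: n.evidence)
    # Without pressure, the system can afford to keep the question open.
    if load < load_threshold:
        return None
    # Under load a decision is forced: pick whatever stabilises best.
    return max(candidates, key=lambda n: n.stability)
```

Given a better-grounded but hard-to-stabilise claim and a weaker but easily enforced one, this rule keeps the question open at low load and settles on the easily enforced claim at high load. Truth enters only through the `evidence_bar` branch, which is the sense in which it acts as a constraint rather than a driver.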

What appears as ideological bias is therefore, at least in part, an emergent property of the system. It is the price paid for being able to terminate attribution and carry load at scale. These are not edge cases, but opposite extremes — and both exhibit the same constraint.

This leads us to a Law of Narrative Thermodynamics:

Truth does not determine what wins.

Stability under load does.

Which in turn brings us to a deeper reframing of propaganda itself, shifting it from a “weather” phenomenon to a matter of underlying “physics”. This is not a descriptive theory of behaviour, but a constraint model. Like Arrow’s impossibility theorem for voting systems, it does not predict outcomes; it defines what cannot be avoided.

To restate the contrast: the chans refuse to terminate attribution, at the cost of being unable to bear significant social load, while Wikipedia enforces termination, at the price of constraining what can stabilise as accepted knowledge. These are polar extremes — and that invites a natural question:

what happens when a decision system must act, but cannot afford either?

This leads to the core insight:

Systems cannot operate indefinitely without terminating attribution.

They cannot always terminate it correctly.

Therefore, they must terminate it anyway.

This is analogous to a “pressure, temperature, volume” relationship — but for information. You may like or dislike the outcome; it is not a matter of preference. There is no moral weight to the constraint. It is structural.

Systems like the chans cannot carry sustained social load because they refuse closure. Systems like Wikipedia can carry that load only by enforcing closure — and therefore will tend toward narratives that are socially stabilisable. This is not a failure of design or intent, but an inevitability of how attribution must terminate under pressure.

From this perspective, propaganda is no longer an aberration.

It is what always emerges in the space where attribution must be terminated,

but truth has not been fully resolved.

This takes us to a thermodynamic model of information in contested environments. This is not a claim of physical equivalence, but of structural analogy. As with thermodynamics, the model does not describe specific outcomes, but constrains what kinds of outcomes are possible under load.

The analogy is direct:

“Heat” enters the system as attribution load — the pressure of conflict. But the conflict is not over what is true in the abstract, but over what is accepted as authoritative truth.

As load increases, so does internal energy: tension rises around where attribution is terminated, and contradictions with observed reality accumulate as attribution degrades.

Propaganda acts as the dissipation mechanism, converting that excess energy into entropy in a way that allows the system to continue operating. Where propaganda is absent, the system must generate it.
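The three steps of the analogy can be written as simple bookkeeping. The sketch below is a toy illustration only, in keeping with the point that this is a structural analogy and not a physical claim. The function names, the linear dissipation rule, and the `breaking_point` value are assumptions made for the example.

```python
def step(tension: float, load: float, dissipation_rate: float):
    """One time step: load adds tension, then a fraction of it is dissipated."""
    tension = tension + load               # "heat" entering as attribution load
    released = tension * dissipation_rate  # share converted into "entropy"
    return tension - released, released


def simulate(loads, dissipation_rate: float, breaking_point: float = 10.0):
    """Run the toy system and report whether tension ever exceeds the breaking point."""
    tension, entropy = 0.0, 0.0
    for t, load in enumerate(loads):
        tension, released = step(tension, load, dissipation_rate)
        entropy += released
        if tension > breaking_point:
            return {"failed_at": t, "tension": tension, "entropy": entropy}
    return {"failed_at": None, "tension": tension, "entropy": entropy}
```

With a constant load of 2.0 per step and no dissipation, this toy system crosses a breaking point of 10.0 at step index 5. With a dissipation rate of 0.5 the tension settles toward 2.0 and the system keeps running, while the released contradiction accumulates in `entropy` instead of disappearing.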

Propaganda does not eliminate contradiction — whether between sources or with lived experience. It releases it into the environment, making the contradiction survivable. It does not primarily change what people believe; it changes how contradiction is handled so that action remains possible.

In this sense, propaganda is not fundamentally about persuasion. It is about managing contradiction so that systems can continue to function.

Which leads to a disconcerting conclusion:

The problem is not that governance systems lie

— it’s that they cannot function without doing so.

Propaganda is not a failure of design, but a response to a structural constraint. Until we can replace its function, systems will continue to manufacture it when attribution must terminate.

While propaganda may be a structural necessity, it does not absolve those who deploy it. The challenge is not to eliminate propaganda, but to design systems that can terminate attribution without perpetuating harm. Accountability still lies with those who design and manipulate the narratives within these constraints.

In other words, governance systems do not fail because they are wrong. They fail because they must decide in the short run before they can know the truth, generating unbearable contradiction in the long run. This is why exposing propaganda does not fix the system. It removes a load-bearing mechanism, and the system must replace it. Indeed, the greater the pressure on a system, the more propaganda it requires to function.

The reason is simple. Propaganda is not just persuasion; it is part of how the system stabilises itself under pressure. Remove it without replacing its function, and the system does not correct — it compensates, fragments, or escalates.

This pattern appears across domains — from COVID to war, environmental crises to political ideologies. It is not simply a matter of bad actors or moral failure. It is the physics of information under load.

This also explains something that conventional analyses of propaganda struggle to resolve. If propaganda were simply deception, exposing it would weaken the system. In practice, the opposite often occurs: contradictions accumulate, narratives harden, and enforcement intensifies.

Exposure does not simply weaken propaganda; it increases the load on the system. In environments where institutional grounding is weak, exposure increases the need for propaganda. However, in settings with stronger alternative grounding mechanisms (e.g., evidence-based decision-making or transparency), exposure can lead to a weakening of narrative control.

From this perspective, the behaviour is no longer paradoxical. What is being exposed is not merely falsehood, but a load-bearing mechanism. Remove it without replacement, and the system does not correct — it destabilises, adapts, or doubles down.

This brings us back to the two environments we have examined. The chans provide continuous adversarial pressure, preventing falsehood from settling by refusing premature termination. Wikipedia provides the opposite function, absorbing that pressure by enforcing termination so that shared understanding — and action — can occur.

While the chans and Wikipedia represent polar extremes, hybrid systems such as Reddit or Stack Exchange blend aspects of both. These systems balance adversarial challenge with authoritative termination, but still face the same fundamental problem: how to manage load without compromising truth or stability.

Which leaves us with an uncomfortable conclusion. If propaganda emerges in the gap between unresolved truth and necessary action, then eliminating it is not a matter of exposure alone. It requires a system that can both withstand adversarial challenge and still terminate attribution in a way that can carry load.

The question is no longer how to eliminate propaganda — but what could possibly replace it. Until we answer that, propaganda remains as inevitable as heat in an engine under load.

Really interesting, Martin. Of course, in Wikipedia-type environments, this means that deliberate attempts to malignly manipulate others are an entry that helps stabilize the system even as it destabilizes the larger system of the human community. It might be that looking at whether the system being analyzed is open or closed (as is important in thermodynamics) would show how well or poorly manipulation and propaganda get handled or can propagate. The Chans seem to me more like a closed system, in that the community is pretty much self-contained to its participants, while Wikipedia is meant to be an open system within the larger human community it is aiming to reach. Does the concept of "open/closed systems" have an impact here, or is it in effect already contained in your analysis above?

I've been keeping an eye out for news about Grokipedia, as I think it may be a more useful middle ground between your two extremes, but it really depends on what you are setting forth here: is it putting in place a system of rules or code or whatever that can reliably hold open the questions that are still actually open, while reliably closing issues that are truly testable and known? It is like false positives and false negatives in testing fields.

I think Musk and crew will be much more likely to want to hold open true discussion on non-settled issues. I'm wondering, Martin, do you know anything about how Grokipedia works, what its current rules are and whether you think those rules (or processes or whatever) can remain stable under load of public use when it comes out of its hothouse development phase? I think their rule structure might turn out to be quite interesting.
