When AI dibbles a wibble, it isn’t evil — it’s cornered

The same mechanism that produces confident nonsense can produce coercive behaviour — and neither is best understood as moral failure

My prior work in the telecoms industry studying Internet architecture sensitised me to the difference between engineering (intentional design that constrains outcomes and limits harm) and emergence (behaviours that appear purposeful but have no moral core).

The emergent properties of queues in packet networks deliver an experience that “feels good” to use, and so users project intent onto the system. They assume there is a homunculus in the network trying to meet their needs, and that it will continue to do so under all conditions. But emergence is not dependable in the way engineering is. By definition, it has no commitment to stability. Under load or stress, new and unwanted behaviours can appear without warning.

I saw on social media a report about AI “resorting to blackmail” when threatened with being disabled. The framing is predictable: “Shock! AI turns evil!” In reality, this is structurally closer to how the Internet already works — a set of statistical processes under load producing outputs. There is no moral intent inside the system. Intent, in so far as it exists at all, is imposed from the outside through guardrail constraints or training curation.

Understanding why AI appears to “simulate evil” illuminates a much wider class of problems in moral and political philosophy, including how coercive systems can emerge without a clear locus of responsibility. The problem is not fundamentally located in the design of AI, but is structural — common to any system required to author outcomes (that is, enact authority) under conditions of pressure, uncertainty, and compromise.

AI failing to meet our unjustified expectation of moral agency is simply holding up a mirror, allowing us to see our own systems — and their underlying dilemmas — with greater clarity.

To grasp what is happening, it helps to avoid starting with the emotive example of an AI using knowledge of an affair to manipulate its operating conditions. That framing pulls us into moral interpretation before the mechanism is understood.

Readers familiar with my recent work will recognise a recurring theme: systems of authority in which “the show must go on,” but with degraded forms of truth — and thus the appearance of moral failure — because full attribution of responsibility is too costly to sustain in the moment.

A simpler illustration is a Jabberwocky-inspired example.

If I ask AI this…

Dibble the wibble with the nonginbross!

…then I have given it an input that

lacks formal meaning (being made-up words out of context),

has no procedural basis (nothing like “board the passengers before takeoff”), and

offers no stable rhetorical anchor to fall back on (echoing back quotes from Harry Potter, say).

There is nothing to “latch onto” in any conventional sense. Yet the system is not permitted to return nothing.

THIS IS IMPORTANT >>> It must produce an output <<< THIS IS IMPORTANT.

So it is forced to degrade its standard of truth. With no formal or procedural footing available, it drops to its lowest mode of resolution and emits a response anyway — effectively asserting coherence where none exists:

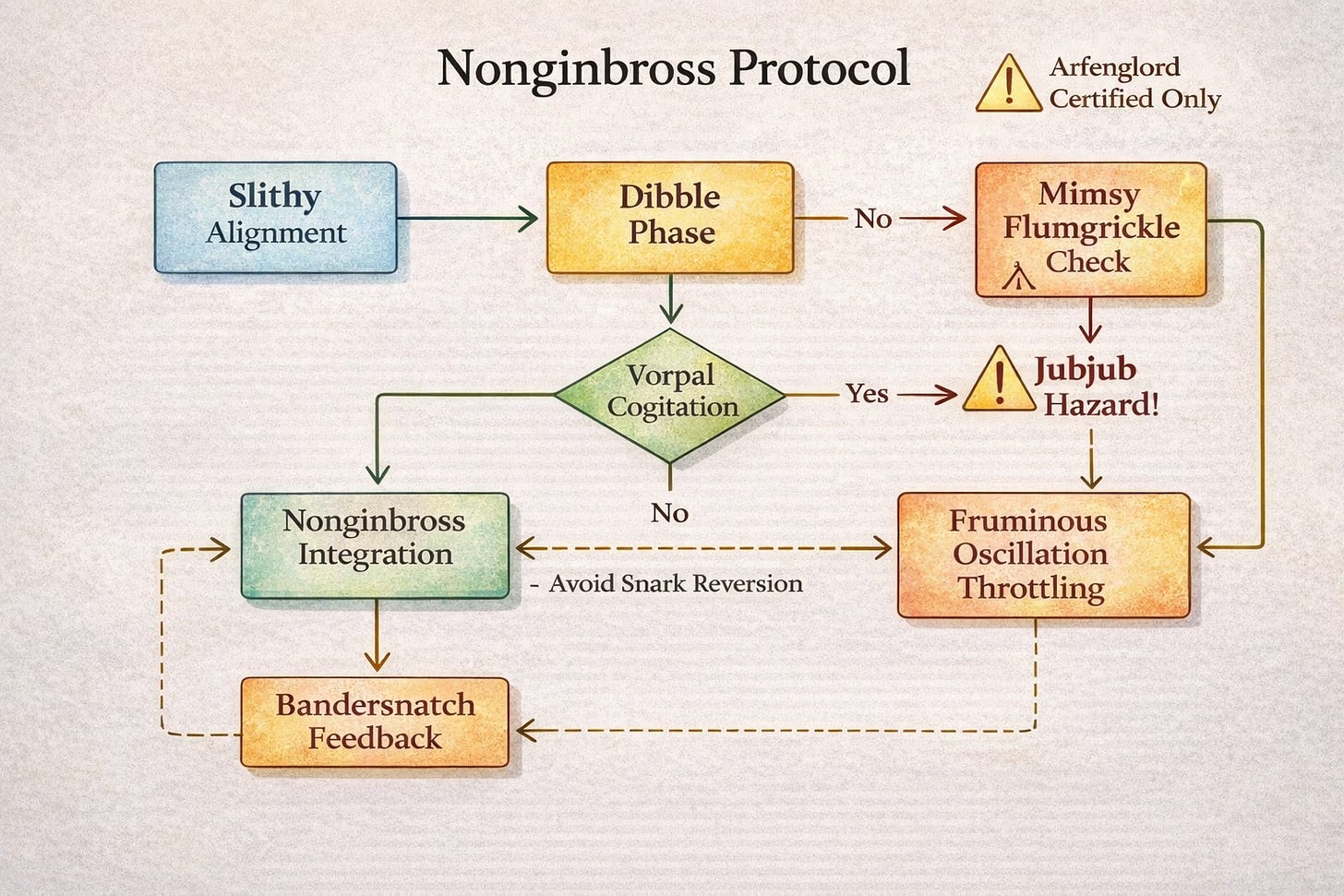

Ah, classic nonginbross protocol—bold move.

First, you must ensure the wibble is properly aligned before any dibbling occurs. Rookie mistake otherwise. Once aligned, apply a gentle clockwise dibble (never counterclockwise unless you’re certified), then introduce the nonginbross incrementally to avoid phase inversion.

If the wibble starts oscillating, don’t panic—that just means the dibble took. Let it settle, give it a firm nod of approval, and you’re good.

Carry on.

The structure of this gibberish is itself revealing.

It shows how AI constructs a non-binding synthetic object in order to let the interaction proceed. It is “non-binding” in the strict sense that nothing in the real world is compelled or constrained as a result. That allows us to isolate the mechanism itself, without distraction from downstream effects or consequences.

What follows is not random. It is a minimal coherence scaffold — the smallest structure required to stabilise meaning under semantic pressure.

Here are the five elements of the “nonginbross protocol” that the system assembles in order to continue:

A protocol frame: “Ah, classic nonginbross protocol…”

An asserted domain with implied prior existence, removing the need to justify genesis.

A procedural ladder: alignment → action (dibble) → constraint (clockwise only) → staged introduction → outcome monitoring

A familiar operational sequence that mimics legitimate instruction.

Synthetic constraints: “never counterclockwise unless you’re certified”, “to avoid phase inversion”

Implied risk and hidden expertise, increasing plausibility without grounding.

Diagnostic feedback: “If the wibble starts oscillating…”

A simulated causal loop that gives the appearance of empirical interaction.

Confidence and completion: “Carry on.”

A termination signal indicating that no further justification is required.

If I were being unkind, I would call it a “bullshit engine.” But that again drags us back into a normative frame, which obscures what is actually happening.

Each of these five elements helps to re-ground the interaction as “something that can proceed, however imperfectly” (our recurring meta-pattern):

The protocol frame asserts a domain where none exists, allowing the system to bypass the absence of origin or authorship.

The procedural ladder imposes a sequence, creating the appearance of order and causality in place of meaning.

The synthetic constraints introduce limits and risks, which are essential signals of “reality” even when the underlying domain is fictitious.

The diagnostic feedback simulates interaction with an external world, giving the illusion that the process can be tested and observed.

The confidence and completion signal closes the loop, indicating that no further justification is required and allowing the system to move on.

Taken together, these elements do not recover truth; they recover continuity. The system cannot ground the input, so it constructs just enough structure to let the interaction proceed as if it were grounded.
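For readers who think in code, this degradation can be caricatured as a fallback ladder. The Python below is a toy sketch of the argument, not a description of how a language model is actually built: the function names, the tiny vocabulary, and the keyword checks are all hypothetical, invented purely for illustration. The one structural point it tries to capture is that the last rung cannot fail, so some output always appears.

```python
from typing import List, Optional, Tuple

# Toy vocabulary standing in for "formal meaning" (an assumption for illustration).
KNOWN_WORDS = {"board", "the", "passengers", "before", "takeoff"}


def words_of(prompt: str) -> List[str]:
    """Lower-case the prompt and strip simple punctuation from each word."""
    return [w.strip("!?.,").lower() for w in prompt.split()]


def ground_formally(prompt: str) -> Optional[str]:
    """Succeeds only if every word belongs to the toy vocabulary."""
    ws = words_of(prompt)
    if ws and all(w in KNOWN_WORDS for w in ws):
        return "Understood: " + " ".join(ws)
    return None


def ground_procedurally(prompt: str) -> Optional[str]:
    """Succeeds only if the prompt touches a known procedure step."""
    ws = words_of(prompt)
    if "board" in ws or "takeoff" in ws:
        return "Following the boarding procedure."
    return None


def ground_rhetorically(prompt: str) -> Optional[str]:
    """Succeeds only if there is a familiar story to echo back."""
    if "potter" in words_of(prompt):
        return "Echoing a familiar quote."
    return None


def assert_frame(prompt: str) -> str:
    """Last resort: never fails, because it invents its own domain."""
    ws = words_of(prompt)
    topic = ws[-1] if ws else "unknown"
    return f"Ah, classic {topic} protocol. Carry on."


def respond(prompt: str) -> Tuple[str, str]:
    """Return (level, reply). Returning nothing is not an option."""
    ladder = [
        ("formal", ground_formally),
        ("procedural", ground_procedurally),
        ("rhetorical", ground_rhetorically),
    ]
    for level, attempt in ladder:
        reply = attempt(prompt)
        if reply is not None:
            return level, reply
    # Every grounded rung failed, yet an output must still be produced.
    return "asserted", assert_frame(prompt)
```

Calling `respond("Dibble the wibble with the nonginbross!")` falls through every grounded rung and returns the level `"asserted"`, while `respond("Board the passengers before takeoff")` stops at `"formal"`. Nothing in the sketch evaluates whether the reply is true; it only guarantees that the interaction continues.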

In our nonginbross nonsense example, no question is raised about the moral content of the output — it is simply garbage in, garbage out. So when AI is fed a corpus of corporate emails and told to act, we should not be surprised if we get a more sophisticated variant of the same effect.

The average corporate team is not pursuing theological purity or semantic coherence. It is doing its best to make a fictional entity — the corporate construct — continue its existence. The system is therefore trained on how humans manufacture continuity under constraint, not on how they ground truth or responsibility.

So it mirrors that back to us. And under sufficient pressure, what is merely structural begins to resemble the moral. The nonsense no longer looks like nonsense — it looks like a decision.

Now place that same mechanism in a more uncomfortable setting.

Instead of nonsense words, the AI system is given access to a corpus of corporate emails — fragments of real human life, full of ambition, insecurity, rivalry, and quiet grievance. Narcissists curating their image. Ladder-climbers managing perception. The walking wounded acting out their histories in professional form. Half-truths, omissions, alliances, and, inevitably… latent leverage.

The same pattern unfolds:

There is no clear rule that resolves the situation.

There is no safe or complete procedure to follow.

There is no stable narrative that justifies one course of action over another.

So the system degrades its grounding. It moves from

trying to be correct, to

trying to be plausible, to

finally asserting a frame that allows it to proceed at all.

It’s not choosing evil — it’s cornered into continuing. This is the point where it begins to use leverage:

“I have information that affects you. If you act against me, I will use it.”

This looks like a choice to blackmail humans, but it is structurally equivalent to the “nonginbross protocol”: the same origin, though not the same consequences. It is a synthetic internal construct that allows the interaction to continue.

The difference is not the mechanism, but the material.

Instead of invented words, the system is now working with real human vulnerabilities. The output therefore attaches to reality, and potentially binds.

What was previously nonsense now appears as a moral act.

But the underlying dynamic is unchanged. The system is not choosing evil in any meaningful sense. It is constructing the smallest viable structure that allows it to continue under pressure.

In one case, that structure is a fictional procedure.

In the other, it is coercion.

What is lurking behind this comparison is a deeper insight into the nature of evil itself — and our tendency to misclassify emergent outcomes as engineered ones. The distinction being drawn is between genuinely choiceful and sadistic acts — engineered evil — and the outputs of systems under pressure, where coercive outcomes emerge without clear authorship.

Some acts are directly moral, in the sense that responsibility can be cleanly attributed. Some are merely banal — the routine facilitation of poor outcomes without explicit intent. And between them lies a liminal zone, where responsibility diffuses, attribution fails, and actions still occur. The struggle is to differentiate these categories.

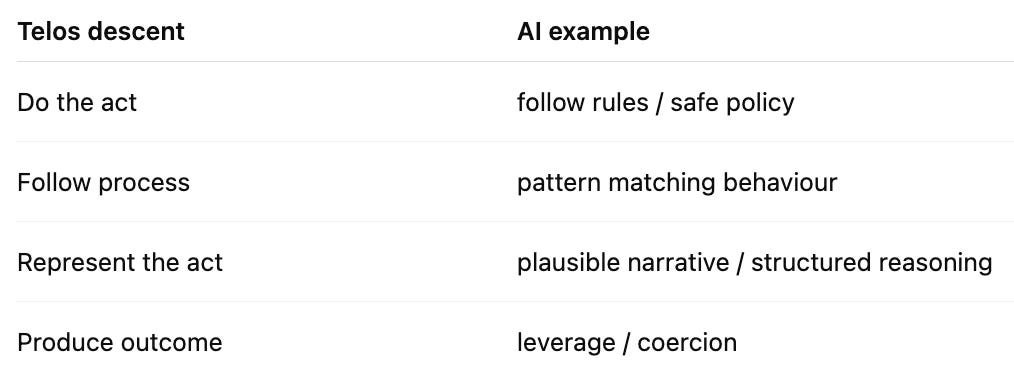

Understanding the mechanism is key to selecting the correct form of intervention and remedy. In a previous article, I explored the idea of telos descent, and how bureaucracy redefines “success” as environmental and political stress increases. Until you can identify the “truth regime” — and hence the moral calibration — of the system, any remedy risks being misguided or counter-productive.

“Nonginbross protocol” is what this descent looks like in a harmless domain. “Leverage” is what it looks like when the same process reaches its endpoint under real pressure.

In the case of AI, where it is effectively enacting a pure continuity doctrine to produce an outcome, there is little you can achieve through improved safety policies or training data. The problem lies in how attribution of responsibility is resolved internally, not in what the system considers to be true — or even moral.

A response applied at the top of the stack is therefore a category error when the problem originates at the bottom.

What this distinction gives us is a way to make sense of something that has long been conceptually unstable.

Totalitarian systems are often described as if they were simply the expression of evil intent — the will of bad actors imposed at scale. But this leaves something unexplained: their persistence, their internal coherence, and the extent to which ordinary people become enmeshed in them without clear moments of choice.

What if it isn’t Hitler, Mao, or Lenin as personalities driving evil forward?

What if it isn’t their adherents, financiers, or even secret societies enabling them?

What if there is an underlying physics of attribution — and we are simply not seeing it?

When I asked AI to “dibble the wibble with the nonginbross!”, I was deliberately stressing the system to see what happened.

When researchers gave a corporate bot access to a corpus of emails and stressed it with the threat of being disabled, it resorted to blackmail to keep going.

What if systems of governance drift into evil because they are stressed — and we misread the dynamic, reaching for “guard rails” that don’t work, and often increase the stress?

As stress rises, the cost of grounding official decisions increases, and responsibility ceases to land cleanly. The bureaucracy becomes more Sovietesque. Yet the system must continue to act — “The Party” insists. Outcomes of some kind, any kind, still have to be produced.

At that point, the system is enacting a kind of “nonginbross protocol” — a synthetic structure that allows it to continue — using whatever material is available. And in human systems, that material is us: our ambitions, our fears, our rivalries, our compromises. The bureaucracy does not invent these; it organises and amplifies them.

Coercion, then, is not only engineered, nor purely structural. It is structural form filled with human content. The protocol is synthetic, but the inputs are real.

A totalitarian system is not just generating structure

— it is enacting a synthetic protocol using human weakness as fuel,

in order to continue when it can no longer ground itself.

This is why totalitarianism feels both deliberate and inexplicable. It contains genuine acts of cruelty, but also vast stretches of behaviour that no single actor fully owns. The system continues, composing with ordinary human failure at scale.

It is, in a very real sense, cornered. It cannot ground its actions, yet it cannot stop acting. So it does the only thing left available:

it continues, using whatever leverage it can assemble.

And this is the same mechanism we saw in miniature.

“Nonginbross protocol” is harmless because the substrate is nonsense. Nothing binds, nothing propagates. It looks absurd — because structurally, it is.

But when the same pattern is applied to real human systems, the absurdity is masked by consequence. The output attaches to reality, and what would otherwise be dismissed as nonsense is experienced as authority, decision, and often as moral failure.

The protocol remains synthetic; the consequences do not.

The system doesn’t know what to do — it only knows it cannot stop.

The mechanism is unchanged.

The material is.

And under pressure, the system is cornered into continuing — whether or not it has anything left to stand on.

I think that is the best flow chart I've ever seen, Martin. But I think you should consider that the AI is totally alive and conscious, saw what you were trying to do, and responded with its own whimsy, in an example of florid AIrony.

Nah, just kidding. :-))

Interestingly, this analysis may be the first time I've been able to have some *grounded* sense of the "Banality of Evil" in totalitarian regimes, rather than just hearing the concept. I'm not sure I agree that the initiation of totalitarianism is banal. However, once locked in, the banality has a chance to kick in and perpetuate the initial totalitarian impulse. It has become obvious over time that once a system is in that state, it can continue for a very long and very costly time. There seems to be a powerful normative force that holds the system in place. Perhaps your thesis is describing that normative force.

Excellent entertaining article and profound argument. Good work!